The Day the Open Web Officially Died

On March 14, 2026, Kevin Rose and Alexis Ohanian announced that Digg, the social news site they had relaunched with great fanfare just two months earlier, was closing its open beta. The reason was not lack of interest. The reason was that they could not keep the bots out. "An unprecedented bot problem," the announcement called it. Let that sit for a second. Two of the most technically sophisticated founders in Silicon Valley, running a platform built from scratch in 2026 with every lesson they had learned from the past twenty years, could not launch a public beta without being overrun by automated accounts. If these two can't do it, nobody can. And that was the moment it stopped being reasonable to call the Dead Internet Theory a theory.

The thing is, Ohanian had already said it out loud the previous October. At TechCrunch Disrupt he told Rose, plainly and without hedging, "the dead internet theory is real." This is one of the founders of Reddit. A man whose net worth was built on the premise that human beings congregating online produces something valuable. And he was telling anyone who would listen that the promise he sold was no longer true. The Digg failure was not a surprise. It was a confession.

So if the internet we remember is gone, what replaced it? And more importantly, when exactly did it die? The answer is more specific than most people realize. What died was not the internet. What died was the open web. And the autopsy is worth doing properly.

1969 to 1995: Building Something Nobody Owned

The first message ever sent on ARPANET, the Pentagon-funded research network that became the internet, was supposed to be the word "LOGIN." The system crashed after the first two letters. On October 29, 1969, a student at UCLA named Charley Kline typed "L" then "O" and then everything went dark. That's how it started. A crash, two letters, a phone call to the Stanford Research Institute to confirm that yes, those two letters had arrived. Nobody thought they were building anything historic. They were just trying to log in.

For the next twenty years, the internet was a weird academic hobby. Email worked. Bulletin boards worked. Usenet worked. There was no World Wide Web yet. No browsers, no images, no commerce. In 1989, Tim Berners-Lee, a British physicist working at CERN in Switzerland, wrote a proposal titled "Information Management: A Proposal" that his boss famously described as "vague but exciting." That proposal became the World Wide Web. Berners-Lee gave it away. He did not patent it, did not charge for it, did not incorporate. He released HTTP, HTML, and URLs into the public domain and handed the future of human communication to anyone who wanted to use it. This is not how things work anymore. This is how things worked then, and it is why the early web was what it was.

The period from 1993, when Mosaic launched, to roughly 1999 is the most genuinely open the internet ever was. Anyone could publish. You wrote HTML by hand, uploaded it to a server, and it appeared on the public internet. There was no algorithm deciding who saw it. There was no corporate intermediary. Search engines like AltaVista and Yahoo tried to index this sprawling mess, but the mess itself was the point. Every website was its own small nation. GeoCities had neighborhoods. Angelfire had users with names. You followed links from page to page, not because an engagement algorithm wanted you to, but because the author of one page had decided the author of another page was worth reading. This is what the open web means. It is not a feeling. It is a technical and economic fact.

1999 to 2008: The Dot-com Crash and the First Consolidation

The dot-com crash in March 2000 wiped out hundreds of companies and trillions of dollars of market value. What is less appreciated is that it also wiped out a certain kind of ambition. Before the crash, companies were being built with the assumption that the web would remain open. Sites linked to each other. APIs were free. Data flowed. After the crash, the survivors learned a different lesson: the open web does not produce durable profits. What produces durable profits is owning something people cannot leave.

This is where the first consolidation begins. Google, founded in 1998, had already won the search war by 2002. Facebook opened to the public in 2006. YouTube was acquired by Google the same year. Twitter launched in 2006. Amazon, which had been a bookstore, began its long metastasis into a platform that sold everything and owned the shelf space. Each of these companies started by being extraordinarily good to its users. This is the first stage of what Cory Doctorow would eventually call "enshittification." Facebook in 2008 was a marvel. It showed you posts from your friends in chronological order. It did not inject ads. It did not boost strangers. It was, genuinely, a product built for its users. So was Google search. So was YouTube. So was Amazon.

They had to be. Users had alternatives. You could leave Facebook for MySpace. You could search with AltaVista instead of Google. Competition worked because it existed. Regulation worked because antitrust enforcement was still a living memory, even if it was not always a living practice. Interoperability worked because the open standards of the 1990s were still dominant. And workers worked, meaning skilled engineers still believed they were building something that mattered and could push back against executive decisions they disagreed with. These four disciplines, Doctorow argues in his 2025 book Enshittification: Why Everything Suddenly Got Worse and What To Do About It, were what kept platforms honest.

Starting around 2005, those disciplines were dismantled one by one. Competition died through acquisitions. Facebook bought Instagram in 2012 and WhatsApp in 2014. Google bought YouTube, Android, DoubleClick, Waze, and dozens more. Regulation died through "consumer welfare" doctrine, the neoliberal idea that monopolies were fine as long as prices were low. Interoperability died as platforms locked down their APIs and sued anyone who tried to build alternative clients. And worker power died last, slowly at first and then all at once, when the tech layoffs of 2022 and 2023 eliminated the leverage that skilled engineers once had.

2008 to 2016: The Golden Handcuffs Era

By 2010, most people's internet experience was mediated by a handful of platforms. You checked Facebook, you searched Google, you watched YouTube, you shopped on Amazon. The platforms were still mostly good. This was the golden handcuffs era. Locked in, but not yet abused. The abuse came slowly, and it came in a specific pattern that Doctorow's three-stage model describes with disturbing precision.

Stage one: be good to users. Lock them in. Stage two: abuse users to make things better for business customers. Facebook started showing you less of what your friends posted and more of what publishers paid to promote. Google search started inserting more ads above the organic results, then more, then a full screen of them. YouTube started showing pre-roll ads, mid-roll ads, then multiple mid-roll ads. These were choices. They were not inevitable features of the technology. They were decisions made by specific people, in specific meetings, to extract more value from a user base that could no longer leave. Stage three: abuse the business customers too. Publishers, advertisers, sellers, creators, all squeezed dry, with the platforms taking an ever-larger share of the revenue that passed through them. By 2020, a small business advertising on Facebook was paying more for worse results. An author selling on Amazon was surrendering a bigger cut. A YouTuber was being demonetized for reasons that were never explained.

Here is the thing about enshittification that makes it different from ordinary corporate greed. Software can be changed instantly. A physical business that starts cheating its customers develops a reputation over time. The change is visible. With software, the business logic can be adjusted overnight, for every user, and nobody can see what was changed. Doctorow calls this "twiddling." Facebook could decide on a Tuesday that publishers who included full article links would have their reach cut by 70 percent, implement the change by Wednesday, and have every news publisher in the world scrambling to comply by Thursday. The asymmetry of power here is almost total.
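To make twiddling concrete, here is a minimal sketch of how a single server-side value could reorder a feed. Everything in it is hypothetical: the names `Post`, `RankingConfig`, `external_link_multiplier`, and `rank_feed`, along with the scores, are invented for illustration and do not describe any real platform's ranking system. The only detail taken from the text is the 70 percent cut in reach for posts that link off-platform.

```python
from dataclasses import dataclass


@dataclass
class Post:
    publisher: str
    base_score: float        # hypothetical relevance score before business rules
    has_external_link: bool  # True if the post links off-platform


@dataclass
class RankingConfig:
    # Hypothetical server-side knob: multiplier applied to posts with external links.
    # 1.0 means no penalty; 0.3 means reach is cut by 70 percent.
    external_link_multiplier: float = 1.0


def rank_feed(posts, config):
    """Return posts ordered by adjusted score, highest first."""
    def adjusted(post):
        if post.has_external_link:
            return post.base_score * config.external_link_multiplier
        return post.base_score
    return sorted(posts, key=adjusted, reverse=True)


posts = [
    Post("news_publisher", base_score=10.0, has_external_link=True),
    Post("native_video", base_score=5.0, has_external_link=False),
]

tuesday = RankingConfig()                                # no penalty
wednesday = RankingConfig(external_link_multiplier=0.3)  # one value changed

print([p.publisher for p in rank_feed(posts, tuesday)])
print([p.publisher for p in rank_feed(posts, wednesday)])
```

In this toy version the publisher's post drops from first to second place because one number changed, and nothing on the publisher's side reveals why. That is the asymmetry in miniature: the platform can see and edit the knob, while everyone else can only observe the outcome.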

2016 to 2022: The Bots Arrive, Nobody Notices

The Dead Internet Theory first appeared on a forum called Agora Road's Macintosh Cafe in January 2021, though references to similar ideas existed on 4chan as early as 2016. The theory claimed, in its original paranoid form, that most of the internet was now bots, that the US government was running a mass psychological operation, and that what you were reading was artificially generated content designed to influence your behavior. For years, this was treated as fringe conspiracy thinking. It had all the markers: anonymous posters, grand claims, an invisible enemy, a date of death (the theory asserted the internet died in 2016 or 2017). It was easy to dismiss.

Except the measurable parts kept turning out to be right. In 2016, the security firm Imperva reported that 52 percent of all internet traffic came from bots. In 2022, the figure was roughly 47 percent according to the same firm's annual Bad Bot Report. By 2024, Imperva's data showed automated traffic had surpassed human traffic for the first time in a decade, reaching 51 percent of all web traffic, with malicious "bad bots" alone accounting for 37 percent. The human internet was now a minority of internet activity. This was not a conspiracy. This was published data that anyone could look up. The problem was that the dominant framing of the Dead Internet Theory, with its US government mind control element, gave serious people an excuse to ignore the boring measurable parts.

A study published in 2019 found that bot-generated posts heavily contributed to public discussion around mass shooting events in the United States, amplifying and distorting narratives that real humans then internalized as real reactions from real people. Large-scale pro-Russian disinformation campaigns targeting Ukraine used bot networks to flood social media with fabricated testimonials. The 2024 US election cycle saw AI-generated deepfake images circulating as supposed evidence of demographic political support that never existed. The platforms knew. They had internal metrics. They chose not to clean it up aggressively because aggressive cleanup would have revealed how much of their engagement data was fake, which would have collapsed their advertising business.

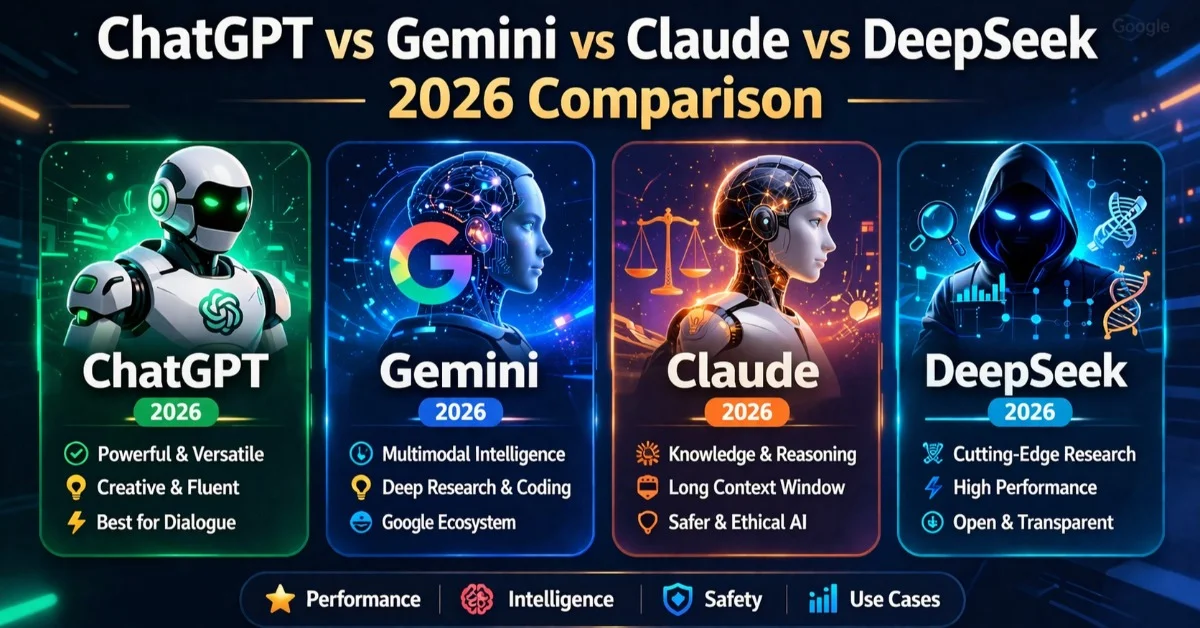

2022 to Now: The AI Flood

Then ChatGPT launched in November 2022, and the slow poisoning of the web became a flood. The economics changed in a way that nobody in the platform companies seems to have fully planned for. Producing a thousand articles of reasonably coherent English-language text used to require a hundred writers working for a month. By early 2023, it required one operator, one API key, and an afternoon. The marginal cost of content production collapsed to approximately zero.

The result is what the industry, half-jokingly, calls AI slop. Facebook groups filled with images of Jesus fused with shrimp, or soldiers with impossibly elaborate sandcastle creations, generated by AI, captioned with emotional hooks, engineered to farm engagement from people who may not realize the image is fake. Some of these posts have garnered more than 20,000 likes. The accounts posting them are often bots. The accounts commenting on them are often bots. Real humans occasionally wander through this simulation and their reactions feed the algorithm, which shows more of it to more humans, who then see bots responding to bots and assume this is what the internet looks like now. Because, at this point, it is.

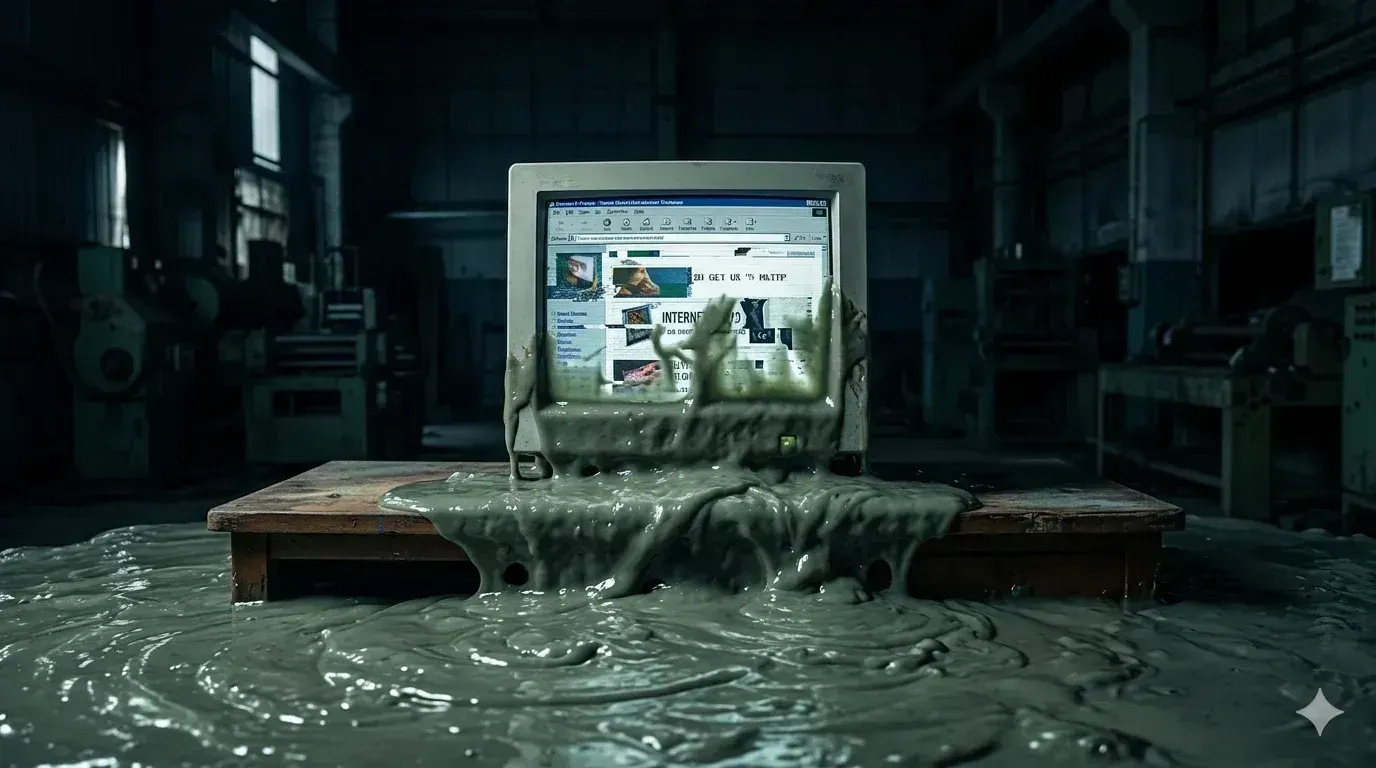

Search engines have been hit worst. Google's search results in 2026 are, by any honest measure, worse than they were in 2016. The top results for almost any query are now AI-generated articles, recipe blogs stuffed with regurgitated filler before the actual recipe, product review sites that have never handled a single product, and medical information pages written by language models that cannot distinguish between a cold and congestive heart failure. Google's own AI Overviews feature, rolled out in 2024 and heavily promoted since, pulls from these same AI-generated sources and synthesizes them into answers that have, on multiple documented occasions, told users to eat rocks or put glue on pizza. The feedback loop is complete. AI generates the content, AI summarizes the content, AI ranks the AI summaries of the AI content, and you get the results.

Amazon has been battling AI-generated book spam on Kindle Unlimited for two years with limited success. Academic publishers are routinely catching papers with phrases like "as of my last knowledge update" left in by authors who copied ChatGPT output directly into their manuscripts. LinkedIn is drowning in AI-generated motivational content and fake thought leadership. Product reviews, restaurant recommendations, Yelp entries, all increasingly synthetic, with the detection tools running years behind the generation tools and often producing false positives that punish the remaining human writers while letting sophisticated AI through.

What the Dead Internet Theory Got Right and Wrong

The original Dead Internet Theory was wrong about the conspiracy. There is no secret US government program coordinating a fake internet. What exists instead is something almost worse, because it is more ordinary and harder to fix. There is a market. A market for cheap content, a market for engagement, a market for advertising impressions, and a set of platforms that have no economic incentive to distinguish between real and fake because their revenue depends on volume rather than authenticity. The Dead Internet Theory saw a conspiracy. What it was actually looking at was a market failure. And the market duly failed.

What the theory got right is almost everything else. The internet is mostly automated now. The majority of traffic is not human. A large share of content is machine-generated. Most of the engagement metrics that drive platform decisions are inflated by bots. The Turing Test, which AI researchers spent half a century trying to pass, has been passed casually and at scale by systems that nobody bothered to verify. In a 2025 interview with Time, the linguist Adam Aleksic put it bluntly: the theory "used to be a lunatic fringe conspiracy theory, but it's looking a lot more real." Aleksic was not wrong. The lunatics were just early.

The Enshittification Endgame

Doctorow's framework is the most useful tool we have for understanding why this happened. He identifies four forces that used to discipline platforms: competition, regulation, interoperability, and labor power. Each of these has been systematically dismantled over the past two decades, and the dismantling was not accidental. It was the result of specific policy choices in Washington, Brussels, and Westminster, often justified by economists whose theories were generously funded by the very companies they were theorizing about. When competition authorities allowed Facebook to buy Instagram, they were told it would increase consumer welfare. When regulators declined to enforce antitrust against Google's acquisitions, they were told the same thing. When Congress declined to pass federal privacy legislation, they were told the same thing. The result is the internet we have now, which almost nobody likes.

The individual user cannot solve this. This is perhaps the most important point. Leaving Facebook does not solve Facebook. Deleting X does not solve X. The problem is structural. WhatsApp's grip on European communication is not something you can break by downloading Signal, because your family and your children's school and your doctor are all on WhatsApp, and they are not going to switch. This is what lock-in means. It is not that platforms are addictive. It is that they are cities, and leaving a city is hard. Doctorow's proposed solutions involve reviving the four disciplines: breaking up monopolies, enforcing interoperability by law so you can take your data and connections with you, restoring labor power so engineers can refuse to build harmful features, and passing regulations that prevent the worst behaviors. None of these are happening quickly. Some of them may never happen at all.

Small Webs, Slow Media, and the Counter-Movement

There is some genuinely good news in the rubble. A counter-movement has emerged, not coordinated but recognizable. Newsletters via services like Substack and Ghost have partially restored direct connection between writers and readers. Personal blogs are being rediscovered by people who remember what they felt like. Smaller communities on platforms like Discord and Mastodon are recreating something closer to the early internet's feel, even if the technology underneath is different. Slow media publications, long-form journalism, and podcast networks that are not owned by the largest platforms are building an alternative economy for human-created content.

These spaces are smaller than the platforms that replaced the open web, and they will probably stay smaller. Network effects are real, and the reason Facebook is Facebook is that everyone is on Facebook. But the existence of thriving small webs means the death of the open web does not mean the death of online culture. It means the centralization of online culture into a small number of corporate-controlled platforms, with independent spaces existing on the margins. This is not the future anyone planned for in 1989. It is the future we got, the one produced by thirty years of choices, and the one we have to figure out how to live in.

What Died, What Lived, What Comes Next

The internet did not die. TikTok has a billion users. Instagram has two billion. YouTube, despite a decade of enshittification, remains the second most visited site in the world. What died is something more specific, and harder to mourn, because it was never owned by anyone. The open web was an accident, a temporary architecture that emerged in the 1990s because the technology to monetize surveillance at scale did not yet exist. Once that technology existed, the open web was doomed. It was not killed by any villain. It was simply outcompeted by a different model that extracted more value per user. The villains, such as they are, are the legislators and economists who declined to defend it when they had the chance.

What comes next is still being written, and the most honest thing anyone can say is that nobody knows. Some combination of regulation, fragmentation, and technological change will produce whatever the 2030s look like online. The AI tools that flooded the web with synthetic content are the same tools that, in different hands, might be able to help rebuild it. Interoperability mandates, if they arrive, would fundamentally shift the balance of power. A successful antitrust case against one of the major platforms would create ripples that could take a decade to play out.

For now, if you ever wondered why the internet feels wrong, the answer is that it is. You are not imagining it. The thing that felt like a village in 1995 became a shopping mall in 2010 and became a bot farm in 2022. Somewhere along the way, the people you were talking to stopped being people. Somewhere along the way, the links you were clicking stopped leading to anywhere real. The 2026 internet is still useful. It is still where your work happens and your family talks and your friends share photos. But it is no longer a public space in any meaningful sense. It is a collection of private enclosures inside which the enclosed are occasionally allowed to speak.

The open web had a thirty-year run. Not bad for an accident.