Last month, a friend who works as a paralegal in a City law firm sent me a message I haven't been able to shake. "Talia," she wrote, "I keep reading that AI can already do 90 percent of what I do. My firm just rolled out a new document review tool. I genuinely don't know what my job looks like in three years." She isn't alone. Over the past few weeks, I've heard nearly identical anxieties from a financial analyst in Leeds, a junior software developer in Manchester, and a marketing copywriter in Bristol. Educated, capable, hard-working people, all of them quietly terrified.

That fear has a real foundation. The pace at which artificial intelligence has embedded itself in professional life is unlike anything most of us have experienced. In 2022, the average professional had never used a large language model. By early 2026, these models are woven into daily workflows across finance, law, healthcare, media, and software. Companies are restructuring around them. Some are quietly shrinking headcounts. Others are freezing entry-level hiring entirely. The headlines don't help: "AI to displace 85 million jobs," "Goldman Sachs warns of 300 million roles at risk," "Tech sector posts 40 percent jump in layoffs."

But here's the thing: the real picture is considerably more nuanced than either the panic or the reassurance suggests. Some jobs are genuinely in trouble. Others are becoming more valuable. And the single biggest factor determining which side you land on is not your industry, your salary, or even your education level. It's whether you understand what AI can and cannot actually do, and whether you're willing to act on that understanding.

This piece is not a comfort blanket. Nor is it a doom forecast. It's an honest, data-driven analysis of what is actually happening to the labour market in the UK and US right now, sector by sector, with specific examples and a practical framework for what to do next.

1. The Numbers: What the Data Actually Says

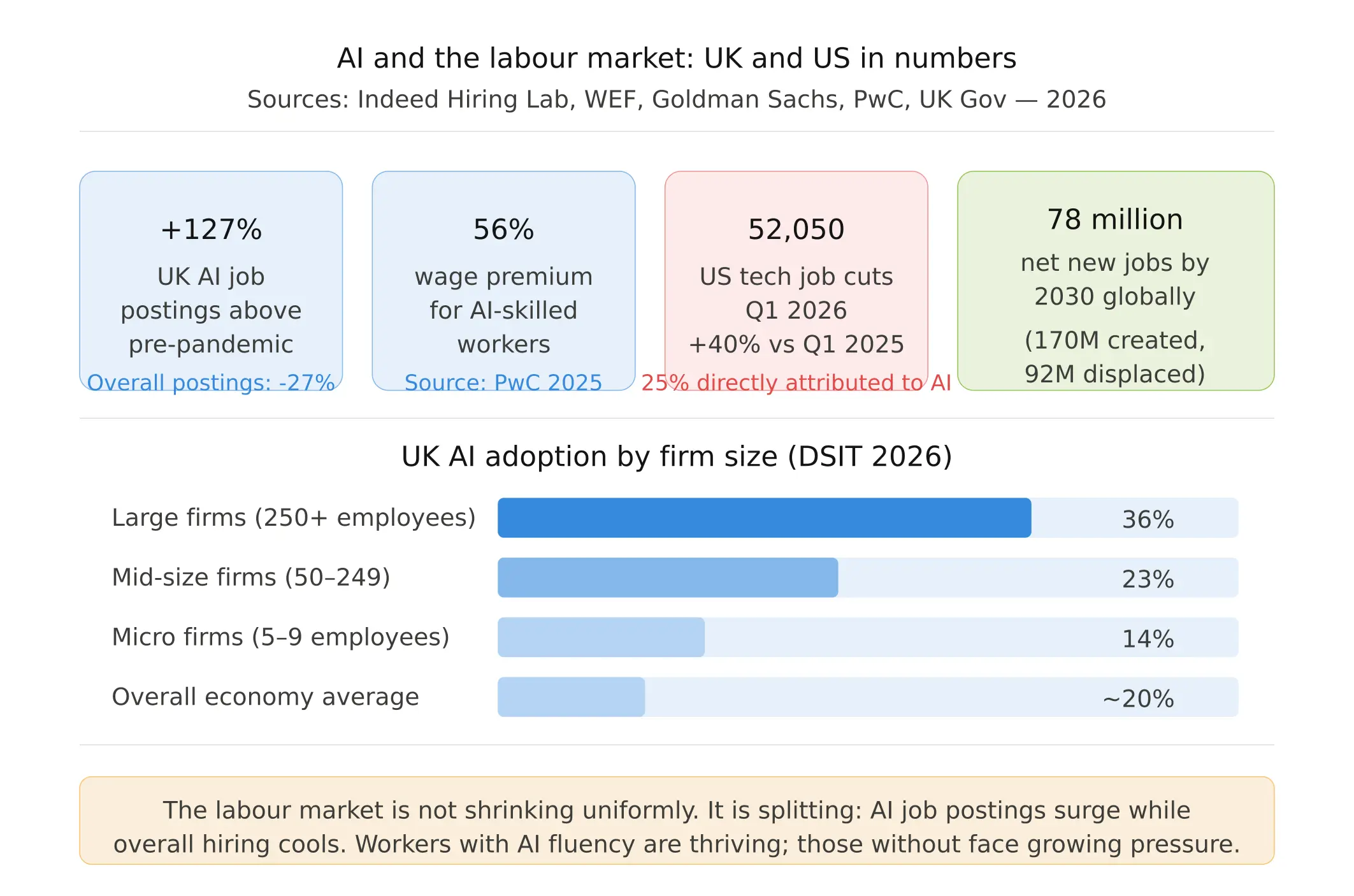

Let's start with the headline figures, because they're both alarming and routinely misread. The World Economic Forum's Future of Jobs Report 2025 surveyed over 1,000 employers representing 14 million workers across 55 economies. It projects that 92 million jobs will be displaced by 2030. That sounds catastrophic. The same report also projects 170 million new jobs will be created, a net gain of 78 million. That part tends to get less coverage.
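For readers who want to check the arithmetic, here is a minimal sketch using only the two WEF figures quoted above. The "gross churn" line is my own addition for context, not a number taken from the report.

```python
# Figures as quoted above from the WEF Future of Jobs Report 2025,
# in millions of jobs projected by 2030.
jobs_displaced_m = 92
jobs_created_m = 170

# Net change: roles created minus roles displaced.
net_change_m = jobs_created_m - jobs_displaced_m

# Gross churn (an illustrative extra, not a WEF figure): every role that is
# either created or displaced, which is what workers actually live through.
gross_churn_m = jobs_created_m + jobs_displaced_m

print(f"Net change: +{net_change_m} million")
print(f"Gross churn: {gross_churn_m} million")
```

The net figure is positive, but the churn figure is a reminder that a net gain can still mean a great many individual transitions.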

In the United States, Challenger, Gray & Christmas, the outplacement firm that tracks layoff announcements, reported that in the first quarter of 2026 the US tech sector saw 52,050 job cuts, a 40 percent jump from the same period a year earlier. In March alone, AI was the stated reason for 15,341 announced cuts, roughly 25 percent of that month's total. Just a month earlier, that figure was 10 percent. The AI-attributed share of layoffs more than doubled in a single month.

However, attribution is not causation. Deutsche Bank analysts coined a pointed phrase for this moment, "AI redundancy washing": the tendency for companies to blame AI for job cuts that may have happened anyway, because it sounds more strategic than admitting to cost-cutting. The CEO of Randstad, the world's largest staffing firm, told CNBC directly that many of the reported job losses are driven by general market uncertainty, not AI adoption.

The UK picture is equally complicated. According to Indeed's Hiring Lab, overall UK job postings in early 2026 sit 27 percent below their pre-pandemic baseline. Wage growth has slowed to a four-year low. The unemployment rate reached 5.2 percent in the three months to January 2026, a post-pandemic high. Yet in that same cooling market, one category is surging: job postings that mention AI have climbed to 127 percent above pre-pandemic levels. The labour market isn't simply shrinking. It is splitting.
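"Percent below" and "percent above" a baseline are easy to misread, so here is a rough sketch that converts the two job-posting figures above into multiples of the baseline. It assumes both figures are measured against the same pre-pandemic period, and that each category is compared with its own baseline level. The helper name `relative_to_baseline` is mine, for illustration only.

```python
def relative_to_baseline(percent_change: float) -> float:
    """Convert 'X percent above/below baseline' into a multiple of baseline."""
    return 1 + percent_change / 100


# Figures as quoted above: overall postings are 27 percent below baseline,
# AI-mentioning postings are 127 percent above it.
overall = relative_to_baseline(-27)
ai_postings = relative_to_baseline(127)

print(f"Overall postings: {overall:.2f}x baseline")
print(f"AI-mentioning postings: {ai_postings:.2f}x baseline")

# How much faster AI postings have grown, relative to their own baseline,
# than postings overall. This compares growth rates, not absolute volumes.
print(f"Relative growth ratio: {ai_postings / overall:.1f}")
```

In other words, overall postings sit at about three-quarters of their old level while AI-mentioning postings are at more than double theirs. The ratio says nothing about absolute volumes: AI postings may still be a small slice of the whole.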

The UK government's own assessment, published in January 2026 by the Department for Science, Innovation and Technology, adds an important caveat: only around one in five UK firms currently use or plan to use AI. Within those firms, fewer than one in three employees actually use it. Adoption is real, but it remains concentrated. The disruption is not yet economy-wide; it is intense in specific pockets, and those pockets are expanding fast.

2. Which Jobs Are Actually at Risk? A Sector-by-Sector Breakdown

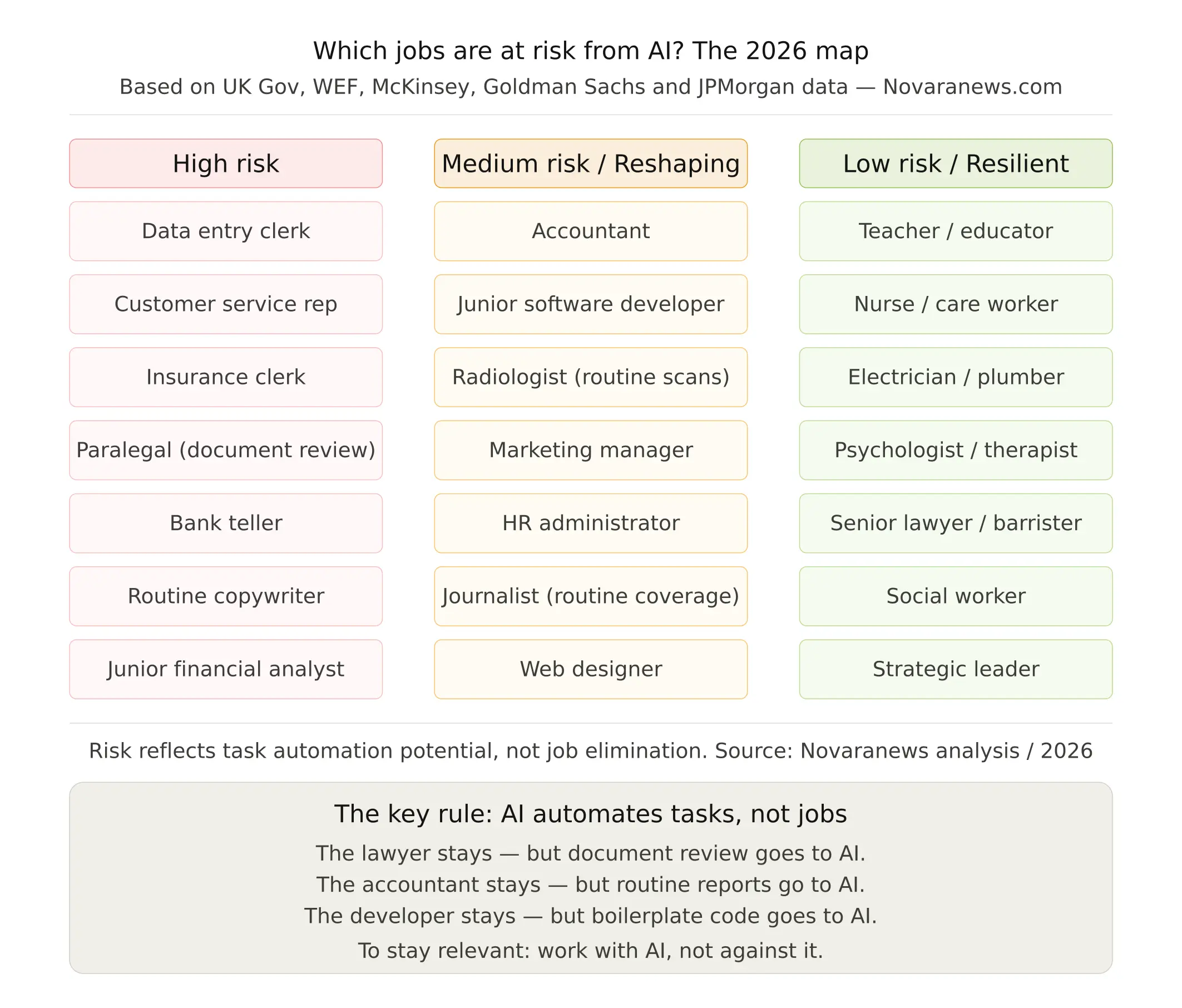

The most important conceptual shift you can make is this: AI is automating tasks, not jobs. A job is a bundle of tasks. The question is what proportion of your job's tasks can be automated, and how quickly. That determines your exposure far more accurately than your job title.

High Exposure: Knowledge Work with Repetitive Cognitive Tasks

The UK government's analysis is unambiguous: finance and insurance is more exposed to AI than any other sector in the British economy. That's because so much financial work, at least at the junior and mid levels, involves structured information processing: reviewing loan applications, reconciling accounts, generating standardised reports, flagging compliance issues. These are tasks that AI systems now perform faster, cheaper, and with fewer errors than humans.

JPMorgan's COIN platform already processes in seconds what used to require 360,000 hours of human review a year. Goldman Sachs reported productivity gains of 15 to 20 percent in its financial workflows in 2025 alone, driven largely by AI-assisted processes. Approximately 200,000 jobs are expected to be cut from Wall Street banks over the next three to five years, according to Bloomberg analysis. As much as 54 percent of banking jobs are considered to have high potential for AI automation.

Legal work faces a structurally similar challenge. Paralegals and document review specialists are among the most exposed professionals in the UK workforce. McKinsey estimates that 90 percent of legal research tasks can now be performed by AI. Clifford Chance, one of the world's leading law firms, has reduced its contract review time by 80 percent using AI tools. AI tools are expected to replace a significant portion of legal support roles, with paralegals facing an 80 percent risk of automation by 2026 and legal researchers a 65 percent risk by 2027. The partner giving client advice, arguing in court, or structuring a complex deal is far less exposed. The assistant doing the groundwork is in a genuinely precarious position.

Customer service is perhaps the most immediately visible disruption. Klarna's AI assistant handled the equivalent of 700 full-time employees' workloads within months of deployment. Progressive Insurance in the US now resolves 95 percent of claims under $10,000 without human involvement. Advanced conversational systems can handle up to 80 percent of first-level customer inquiries in banking and telecoms. Entry-level customer-facing roles are vanishing at a pace that the employment statistics are only beginning to capture.

Medium Exposure: Skilled Professionals Being Reshaped

This is where the picture gets more complex, and where most professional anxiety concentrates. Software development is perhaps the starkest example. In 2024, UK digital sector employment dropped for the first time in a decade. The number of 16 to 24-year-olds working in computer programming fell by 44 percent in a single year, according to UK government data. AI coding assistants like GitHub Copilot and Claude Code can now write, test, and debug significant portions of production code.

But senior engineers are not being replaced; they are being amplified. The work that remains human is the work that was always the hardest: architectural decisions, system design under constraints, debugging complex failures, understanding what a business actually needs and translating it into software. What's disappearing is the entry ramp. Junior developers who once learned by writing boilerplate code no longer have that apprenticeship. This is a serious structural problem for the profession's long-term talent pipeline, even if it doesn't immediately threaten established engineers.

Healthcare presents a paradox. Radiology and pathology are among the most technically exposed medical specialisms: AI systems can analyse scans with accuracy rates that rival or exceed trained clinicians in controlled conditions. A Google DeepMind system can detect over 50 eye conditions with 94 percent accuracy. PathAI identifies cancer with 99.5 percent precision. Yet overall healthcare employment is growing, not shrinking, because the demand for human care (for nurses, physiotherapists, general practitioners, and social care workers) far outpaces what automation can absorb. The tasks change; the need for people does not.

Marketing and creative services sit at an interesting inflection point. A single strategist with access to AI tools can now produce what previously required a team: research, copy, design concepts, data analysis, campaign reporting. The implication is not that marketing departments disappear, but that they shrink while output expectations rise. The people who remain are those who can direct, edit, and quality-control AI output; those who cannot are increasingly redundant.

Lower Exposure: The Jobs AI Cannot Reach

The most counterintuitive finding in the research literature is this: the jobs least exposed to AI disruption are not the highest-paid or most educated. They are the most physical, the most relational, and the most context-dependent.

Electricians, plumbers, carpenters, construction workers, and other skilled tradespeople face almost no near-term automation risk. Their work requires navigating unpredictable physical environments, adapting in real time to conditions that no training dataset can fully anticipate. Robotics is advancing rapidly, but the dexterity and judgment required to rewire a Victorian terrace house or diagnose a leaking boiler in an unusual configuration remain genuinely beyond current systems.

The same logic applies to care work, nursing, physiotherapy, social work, and teaching. These roles require continuous human presence, emotional attunement, and the ability to respond to another person's distress, confusion, or need in ways that are not reducible to pattern recognition. AI can assist these workers; it cannot replace the relational core of what they do.

The UK government's own labour market projections illustrate this clearly. By 2030, jobs directly involving AI activities could rise from 158,000 today to 3.9 million. The roles expected to grow most strongly include secondary education teachers, programmers and software development professionals, and financial managers, but only those who have integrated AI fluency into their practice. The projection is not for fewer people doing these jobs; it is for different people doing them differently.

3. What AI Cannot Do: The Enduring Human Advantages

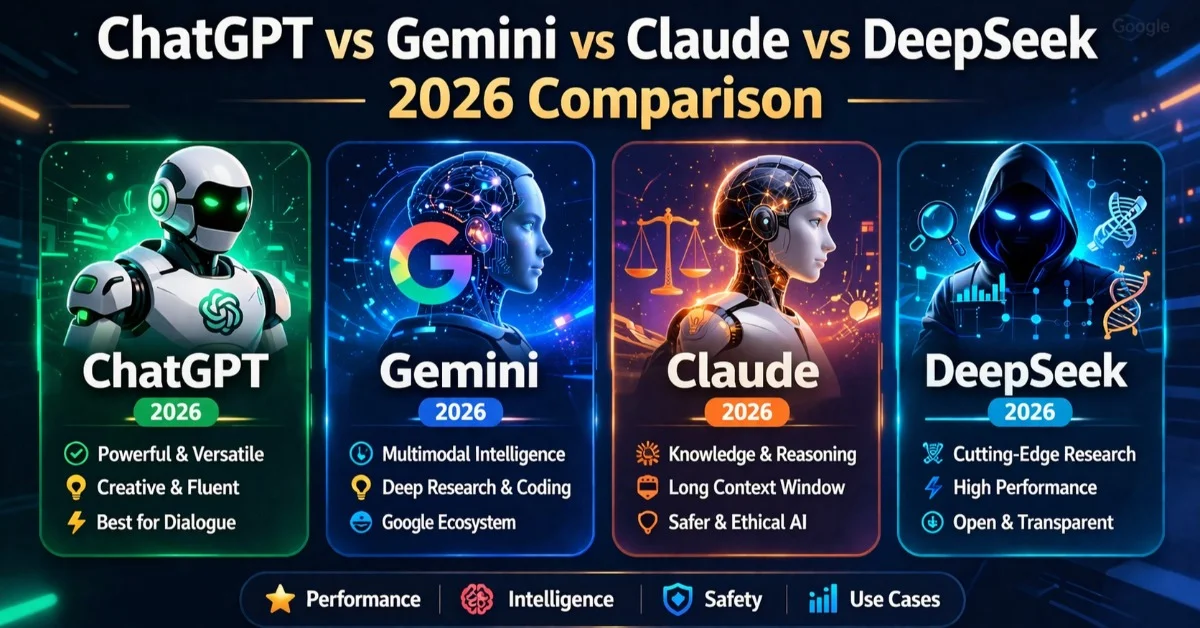

Every serious analysis of AI's capabilities eventually arrives at the same boundary. AI systems are extraordinarily good at processing information, recognising patterns, generating plausible outputs, and optimising for defined objectives within a bounded domain. They are genuinely poor, at least for now, at several things that turn out to be central to much of what makes professional work valuable.

Genuine Relational Intelligence

There is a meaningful difference between an AI that produces empathetic-sounding language and a human being who genuinely understands what another person is going through. When a client's business is failing, when a patient has just received a serious diagnosis, when an employee is struggling in ways they cannot articulate, the moment requires not just the right words but the right presence. The most sophisticated language models can approximate the words. They cannot provide the presence. And in many professional contexts, the presence is what clients, patients, and colleagues are paying for.

Judgment Under Genuine Uncertainty

AI systems optimise within defined parameters. Real professional judgment often involves deciding what the parameters should be in the first place, when to break the rules, when the situation is genuinely novel, and when the data you have is insufficient to support any confident conclusion. The barrister deciding how to read a jury, the fund manager deciding whether a geopolitical signal matters, and the surgeon deciding whether to operate in an ambiguous case are all exercising a kind of contextual reasoning that current AI systems struggle with, precisely because those systems cannot represent what they do not know.

Original Creative Vision

Generative AI is remarkably good at producing output that resembles existing creative work. It is far less capable of identifying that a creative category needs to be broken entirely, of making the conceptual leap that defines a new artistic movement, a transformative product category, or a genuinely new strategic direction. The people who do that work, who can look at what exists and imagine something that does not yet, retain an advantage that is not easily eroded by pattern-matching systems.

Moral Accountability

When an AI-assisted medical diagnosis is wrong, a human clinician is accountable. When an AI-drafted legal document contains an error that damages a client, a human lawyer bears responsibility. When an AI trading system makes decisions that harm investors, a human professional is answerable to regulators, clients, and courts. The irreducibility of human accountability is not a limitation of current AI systems that will be engineered away; it reflects something deeper about how responsibility functions in societies built on trust.

4. The Skills Premium: Why AI Is Making Some Workers More Valuable

One of the most significant findings from 2025 and 2026 labour market data concerns the wage effects of AI adoption. The pattern is not, as many feared, uniform downward pressure on salaries. It is a growing divergence.

PwC's 2025 Global AI Jobs Barometer, which analysed job postings across major economies, found a 56 percent wage premium for roles that explicitly require AI skills compared with similar roles without that requirement. That is not a marginal uplift; it is a structural shift in what different workers are worth to employers. AI skills are commanding salary differentials comparable to the premium once associated with advanced degrees.

Research from the World Economic Forum reinforces this picture. AI skills now appear to act as a partial equaliser in hiring, helping older workers and those without advanced degrees compete more effectively for roles. When AI skills are supported by a recognised certificate, the effect is even stronger. In a labour market where credentials have long been a proxy for capability, demonstrable AI fluency is proving to be a more direct signal.

The UK's AI market is currently valued at £72.3 billion and is the largest AI market in Europe, according to UK government figures. AI-related job postings have climbed to 127 percent above pre-pandemic levels even as overall postings remain depressed. The message from employers is consistent: we need fewer people to do the same work, but the people we do need must be able to work effectively with AI systems. The workers who have made that transition are doing better than ever. Those who have not are increasingly competing for a shrinking pool of roles.

5. A Practical Framework: Five Things to Do Before the End of 2026

Understanding the landscape is necessary but not sufficient. The following steps are grounded in what the data actually shows distinguishes workers who are navigating this transition well from those who are not.

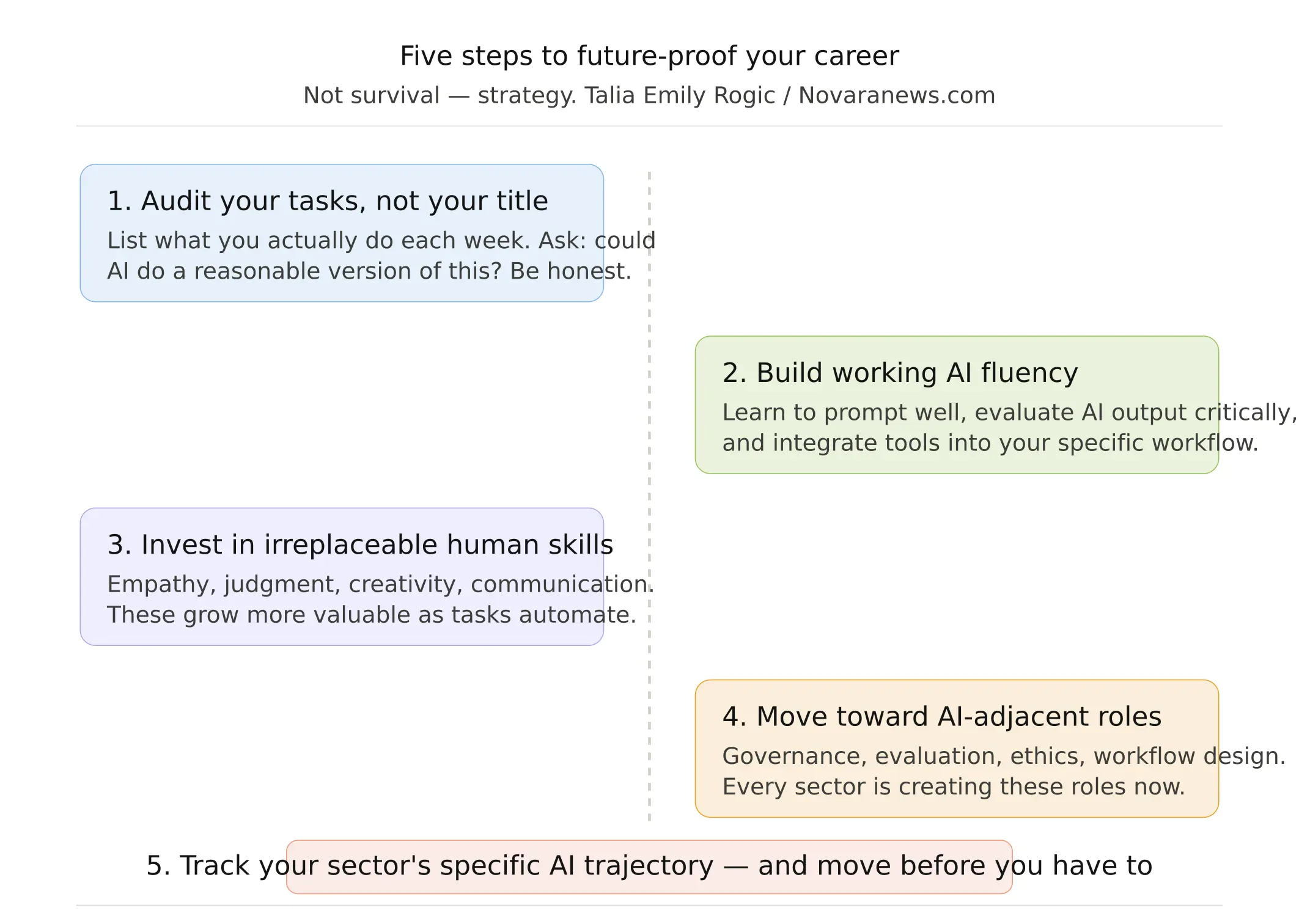

Step 1: Audit Your Tasks, Not Your Job Title

Sit down and write out, honestly and specifically, what you actually do each week. Not your job description; your actual activities. Then ask, for each one: could a capable AI system do a reasonable version of this with access to the right information? If more than half of your tasks fall into that category, you are more exposed than you may have realised. If fewer than a quarter do, you are in a more robust position. This audit is not designed to frighten you; it is designed to tell you where to invest your energy.
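If you prefer a spreadsheet-style approach, the audit can be sketched in a few lines of Python. This is a rough illustration, not a validated scoring tool: the thresholds (more than half, fewer than a quarter) come from the paragraph above, while the `Task` structure, the "in between" label, and the sample week are my own hypothetical additions.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    ai_could_do: bool  # your honest answer to the question in Step 1


def classify_exposure(tasks: list[Task]) -> tuple[float, str]:
    """Return the share of automatable tasks and a rough exposure label."""
    if not tasks:
        raise ValueError("List at least one task before auditing.")
    share = sum(t.ai_could_do for t in tasks) / len(tasks)
    if share > 0.5:
        label = "more exposed"
    elif share < 0.25:
        label = "more robust"
    else:
        # The article gives no label for the middle band; this one is assumed.
        label = "in between"
    return share, label


# Hypothetical week for a paralegal; the tasks and answers are invented.
week = [
    Task("First-pass document review", True),
    Task("Summarising case law", True),
    Task("Drafting standard letters", True),
    Task("Client calls", False),
    Task("Preparing bundles for a hearing", False),
]

share, label = classify_exposure(week)
print(f"{share:.0%} of tasks automatable: {label}")
```

This version counts tasks equally. Weighting each task by the hours it takes would be a reasonable refinement, since one automatable task that fills three days matters more than three that fill an hour.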

Step 2: Develop Working AI Fluency

You do not need to become a machine learning engineer. You do need to be able to use AI tools effectively in the context of your specific work. That means understanding how to frame prompts that produce genuinely useful outputs, how to evaluate AI-generated content critically, how to identify the failure modes relevant to your field, and how to integrate AI tools into workflows in ways that demonstrably improve your output. This is a learnable skill set, and it is learnable quickly. PwC's data suggests it is also one of the highest-return investments currently available in the labour market.

Step 3: Invest Heavily in Irreplaceable Human Skills

The WEF's Future of Jobs Report identifies the fastest-growing skill categories for 2025 to 2030: creative thinking, resilience and adaptability, systems thinking, and empathy and active listening. These are not soft skills in the dismissive sense; they are the capabilities that remain genuinely hard to automate and that become more valuable as the tasks around them are automated away. A financial advisor who can build trust, read a client's actual concerns beneath their stated ones, and communicate complexity with clarity is more valuable in an AI-saturated market, not less.

Step 4: Reposition Yourself Toward AI-Adjacent Roles

Every sector that is being disrupted by AI is simultaneously creating new roles to manage, direct, audit, and improve its AI systems: prompt engineers, AI governance specialists, model evaluators, AI ethics officers, human-AI workflow designers. These are not niche technical roles. They are emerging across finance, healthcare, legal services, education, and the public sector. The common thread is not deep technical expertise but a combination of domain knowledge, critical thinking, and a willingness to engage seriously with how AI systems actually work and fail.

Step 5: Take the Long View on Your Sector's Trajectory

The current disruption is uneven in its pace. Some sectors are moving fast; others are moving slowly. Understanding the specific trajectory of your own field (which tasks are being automated first, what the regulatory environment looks like, how your competitors are deploying AI) gives you meaningful lead time to adapt, so that you are planning rather than reacting. Trade publications, sector-specific research from bodies like the Office for National Statistics or the Bureau of Labor Statistics, and even LinkedIn's own skills data can give you a clearer picture than the generalised media coverage.

The Real Question Is Not About Survival

When I replied to my paralegal friend, I told her something that I believe the data supports: the legal profession is not going away. What is going away is the version of entry-level legal work that involved spending three years doing document review before you were trusted with anything interesting. AI is compressing that apprenticeship in ways that are genuinely disruptive to how young lawyers learn and develop. But the work that experienced lawyers do, the judgment, the advocacy, the navigation of ambiguity, the management of clients under pressure, none of that is being automated. It is, if anything, becoming more central to what law firms are selling.

The same logic applies, with variations, across most of the professional landscape. The disruption is real. The anxiety is understandable. But the question worth asking is not whether AI will take your job. It is whether the version of your job that requires the most of you, the version that drew you to the field in the first place, the version that cannot be reduced to a sequence of retrievable tasks, is the version you are spending most of your time on.

For most people in most fields, the honest answer right now is no. And that is where the real opportunity is. Not in resisting the technology, but in using it to clear away the parts of your work that were never the point, so that you can spend more time on the parts that are.

The workers who will look back on 2026 as a turning point in their careers are not the ones who panicked, and not the ones who ignored what was happening. They are the ones who looked clearly at what was changing, made deliberate choices about where to invest their time and attention, and moved before they had to.