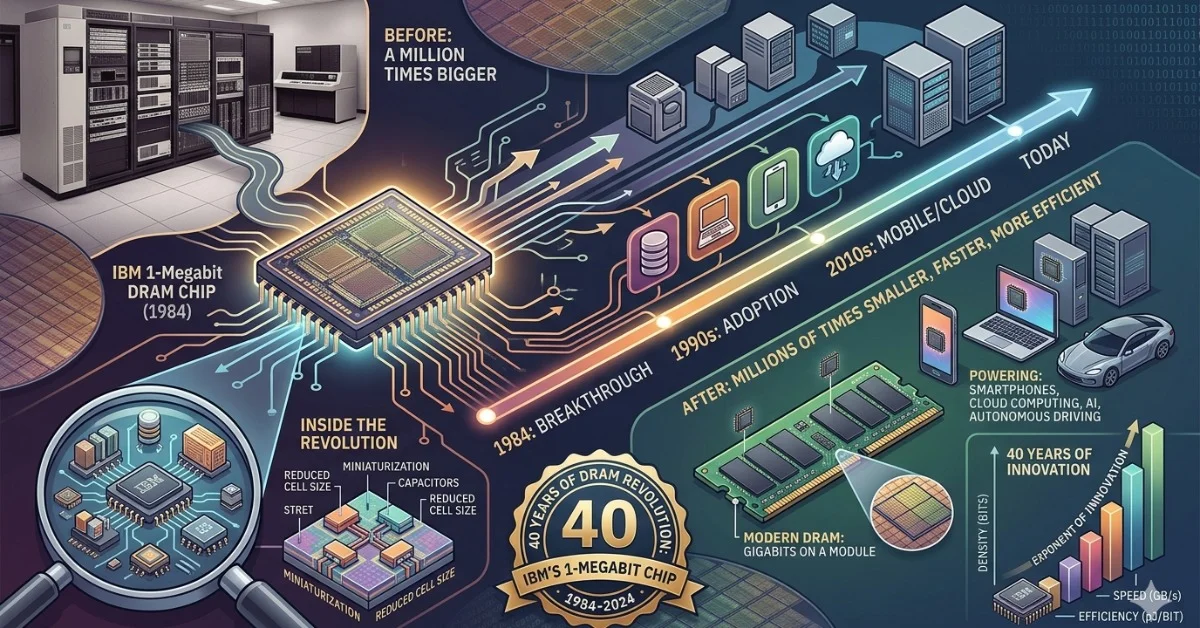

Forty years ago this month, IBM announced it had developed a 1-megabit dynamic random-access memory chip, a milestone that represented a 16-fold increase in capacity per chip over the 64-kilobit DRAM chips that were the industry standard at the time. The April 1986 announcement placed IBM at the leading edge of semiconductor memory development and helped set the trajectory for the memory scaling that would define personal computing through the following decade.

What the 1-Megabit Threshold Actually Meant

In the mid-1980s, 64-kilobit DRAM chips were the workhorses of computer memory. Reaching 1 megabit on a single chip was not a modest incremental gain — it required advances in lithography, cell design, and manufacturing process control that most chip makers had not yet achieved at production scale. IBM's research laboratories had been pushing transistor density boundaries throughout the early 1980s, and the megabit DRAM represented a practical demonstration that those process advances could translate into a shippable component.

The significance extended beyond raw capacity. Higher-density memory chips allowed system designers to build machines with more usable RAM in the same physical footprint, reduce power consumption per bit stored, and lower the cost per kilobyte over time as yields improved. Each of those factors compounded through the late 1980s and into the 1990s as the PC market expanded rapidly.
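The footprint argument can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes binary units (1 kilobit = 1,024 bits), ignores parity or error-correction bits, and uses a hypothetical helper, `chips_needed`, to count how many chips of each generation it would take to build 1 megabyte of memory.

```python
# Illustrative arithmetic only; binary units assumed, parity/ECC bits ignored.
KILOBIT = 1024                # bits
MEGABIT = 1024 * KILOBIT      # bits
MEGABYTE = 8 * MEGABIT        # bits in one megabyte


def chips_needed(target_bits: int, chip_bits: int) -> int:
    """Hypothetical helper: chips required to hold target_bits, rounded up."""
    return -(-target_bits // chip_bits)  # ceiling division


# Capacity ratio between a 1-megabit chip and a 64-kilobit chip.
density_ratio = MEGABIT // (64 * KILOBIT)

# Chips needed to populate 1 megabyte with each generation.
chips_64k = chips_needed(MEGABYTE, 64 * KILOBIT)
chips_1m = chips_needed(MEGABYTE, MEGABIT)

print(f"Capacity ratio: {density_ratio}x")
print(f"1 MB from 64-kilobit chips: {chips_64k} chips")
print(f"1 MB from 1-megabit chips: {chips_1m} chips")
```

Under these assumptions, the same megabyte drops from 128 chips to 8, which is the board-space and per-bit power saving the paragraph above describes.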

IBM's Position in Semiconductor Research at the Time

IBM in 1986 operated one of the most heavily funded corporate research programs in the United States. Its Thomas J. Watson Research Center in Yorktown Heights, New York, and its semiconductor manufacturing facilities gave the company an unusual ability to move from laboratory process development to physical chip production without depending on outside foundries. That vertical integration was central to how IBM was able to demonstrate the megabit chip ahead of most competitors.

Japanese manufacturers, particularly Hitachi, NEC, and Fujitsu, were also aggressively pursuing megabit DRAM during the same period and would go on to dominate merchant DRAM production through the late 1980s. IBM's announcement came amid an intensifying US-Japan competition in semiconductor memory, a rivalry that would eventually reshape the entire industry and that contributed to the US-Japan Semiconductor Trade Agreement signed later that year.

A Benchmark That Defined an Era

The 1-megabit boundary has since become a reference point in the history of memory scaling: the moment commercially relevant chips crossed from kilobit-class to megabit-class capacity. Subsequent generations moved to 4-megabit, 16-megabit, and eventually gigabit-class DRAM within roughly two decades, each transition repeating the same pattern of laboratory demonstration, manufacturing scale-up, and rapid price decline.

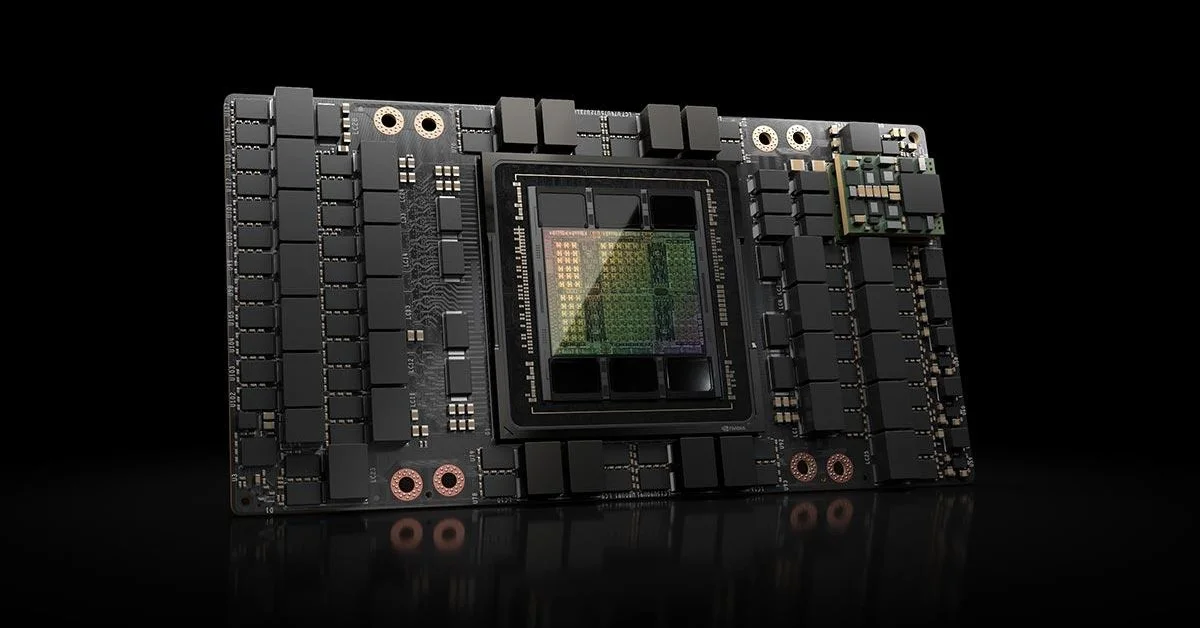

Modern DRAM chips now carry capacities measured in gigabits, and the modules built from them in gigabytes, making the 1986 milestone look modest in raw numbers. What it represented, however, was proof that memory density could scale aggressively, a premise the industry has tested and confirmed repeatedly in the four decades since. Current research into next-generation memory architectures, including high-bandwidth memory stacks and emerging non-volatile technologies, is in many ways a continuation of the same engineering ambition IBM demonstrated in the spring of 1986.