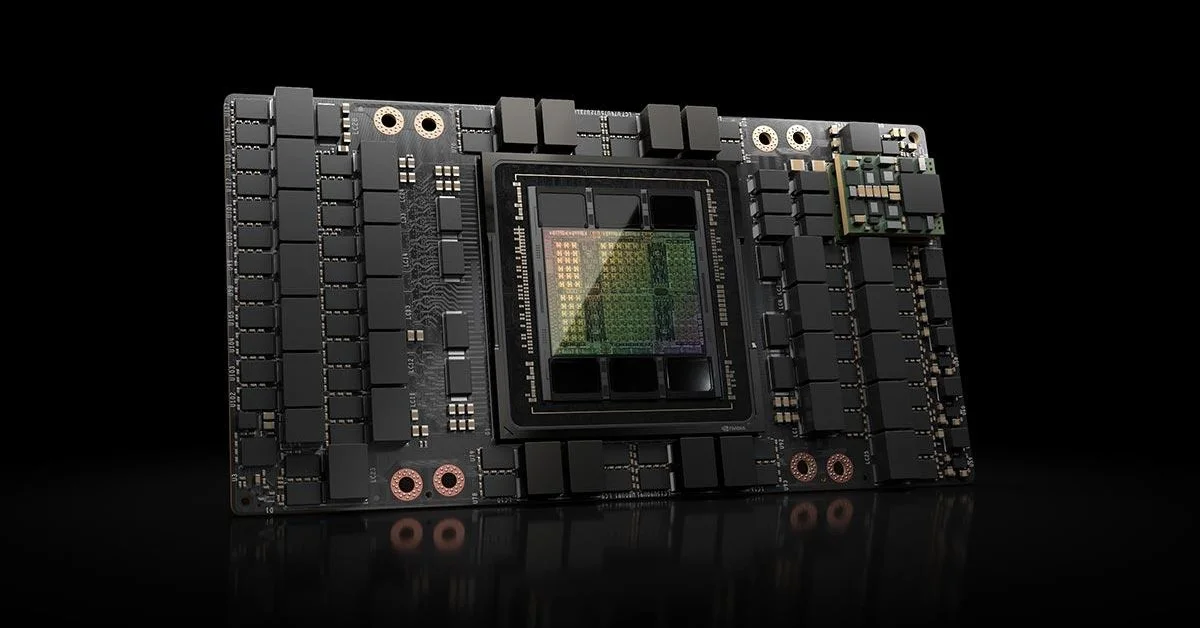

The global race to build advanced artificial intelligence systems is reshaping the hardware industry, and Nvidia is positioning itself at the center of that transformation. In 2026, the company is expanding the rollout of its next-generation Blackwell GPU architecture, aiming to meet rapidly growing demand from cloud providers, enterprises, and AI developers.

According to company statements and industry sources, Blackwell is designed to deliver significant performance improvements over previous generations, particularly in large-scale AI training and inference workloads. Analysts note that demand for high-performance GPUs has continued to outpace supply, driven by the widespread adoption of generative AI technologies.

Blackwell architecture targets AI at scale

Nvidia’s Blackwell platform represents a shift toward more specialized AI hardware. The architecture is optimized for handling massive datasets and complex model training, which are becoming standard requirements for modern AI systems.

Industry analysts suggest that Blackwell GPUs are engineered to improve both energy efficiency and computational throughput. This balance is increasingly important as data centers face rising operational costs and sustainability pressures.

In addition, the architecture is expected to support more advanced interconnect technologies, enabling faster communication between GPUs. This capability is critical for scaling AI workloads across multiple nodes in large data center environments.

Cloud providers drive demand

Major cloud providers are among the primary drivers behind the surge in GPU demand. Companies offering AI services are investing heavily in infrastructure to support enterprise clients and developers building large language models and other AI applications.

According to sector sources, hyperscale data centers are rapidly expanding their GPU capacity, often securing long-term supply agreements with hardware manufacturers. This trend reflects the strategic importance of AI infrastructure in maintaining competitive advantage.

At the same time, enterprises outside the traditional tech sector are increasingly adopting AI solutions, further contributing to demand. Industries such as finance, healthcare, and manufacturing are deploying AI systems that require robust hardware support.

Supply constraints remain a challenge

Despite increased production efforts, supply constraints continue to shape the market. Analysts indicate that the complexity of advanced semiconductor manufacturing processes and limited fabrication capacity are the key bottlenecks.

Nvidia has been working closely with manufacturing partners to scale production, but demand remains exceptionally high. This imbalance has led to longer lead times and increased costs for AI hardware.

Industry observers point out that competition for GPU resources is intensifying, particularly among companies developing large-scale AI models. Access to high-performance hardware is becoming a critical factor in determining which organizations can compete effectively in the AI space.

Competition intensifies across the hardware market

While Nvidia remains a dominant player, competition is growing. Other semiconductor companies are developing alternative AI accelerators aimed at challenging Nvidia’s position.

Analysts note that the market is shifting toward a more diversified ecosystem, where different types of hardware solutions coexist. This includes custom chips designed for specific workloads, as well as general-purpose GPUs optimized for flexibility.

However, Nvidia’s advantage lies in its established software ecosystem, including development tools and frameworks that are widely adopted across the industry. This integration between hardware and software continues to strengthen its position.

AI infrastructure becomes a strategic priority

The rapid expansion of AI capabilities is turning hardware infrastructure into a strategic priority for companies worldwide. Investment in data centers, specialized chips, and energy-efficient systems is growing rapidly.

Experts suggest that the evolution of AI hardware will play a decisive role in shaping the next phase of technological innovation. As models become more complex, the need for powerful and efficient hardware will only grow.

At the same time, concerns around energy consumption and environmental impact are prompting companies to explore more sustainable solutions. This includes optimizing hardware design and improving data center efficiency.

The ongoing rollout of Nvidia’s Blackwell GPUs highlights how central hardware has become to the broader AI ecosystem, as companies continue to compete on performance, scalability, and efficiency.