A severe and potentially long-lasting shortage of DRAM chips is taking shape across the global semiconductor industry, driven primarily by surging demand from artificial intelligence infrastructure. According to reporting by Nikkei Asia, even as the world's largest memory manufacturers race to add production capacity, suppliers are expected to meet only around 60 percent of total market demand by the end of 2027. Analysts and senior executives warn that this gap could widen before it closes.

SK Group Chairman Signals Shortage Could Run to 2030

Among the most striking signals of the supply crisis is a statement attributed to the chairman of SK Group (the parent conglomerate of SK Hynix, the world's second-largest memory chipmaker) suggesting that shortages could persist until 2030. That timeline, if accurate, would mean an extraordinary multi-year constraint on an industry already strained by the rapid buildout of data centers and AI accelerator systems. SK Hynix, Samsung, and Micron Technology, the three dominant global DRAM producers, are all reported to be expanding production capacity, but it is not yet clear whether those investments are large enough, or arriving fast enough, to close the projected gap in the near term.

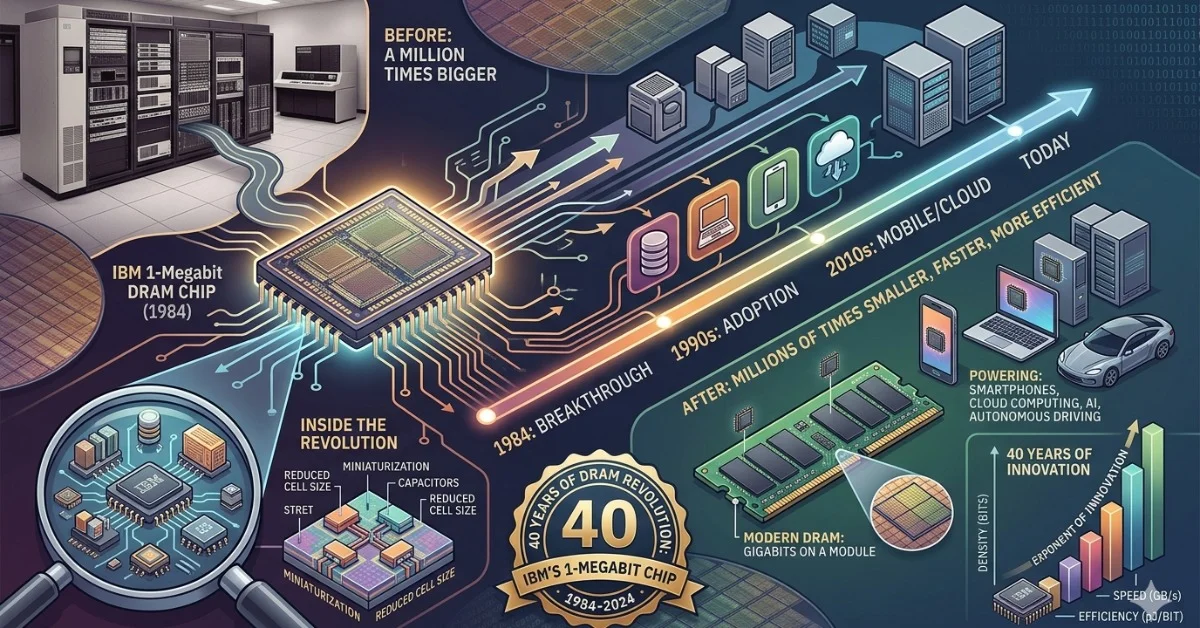

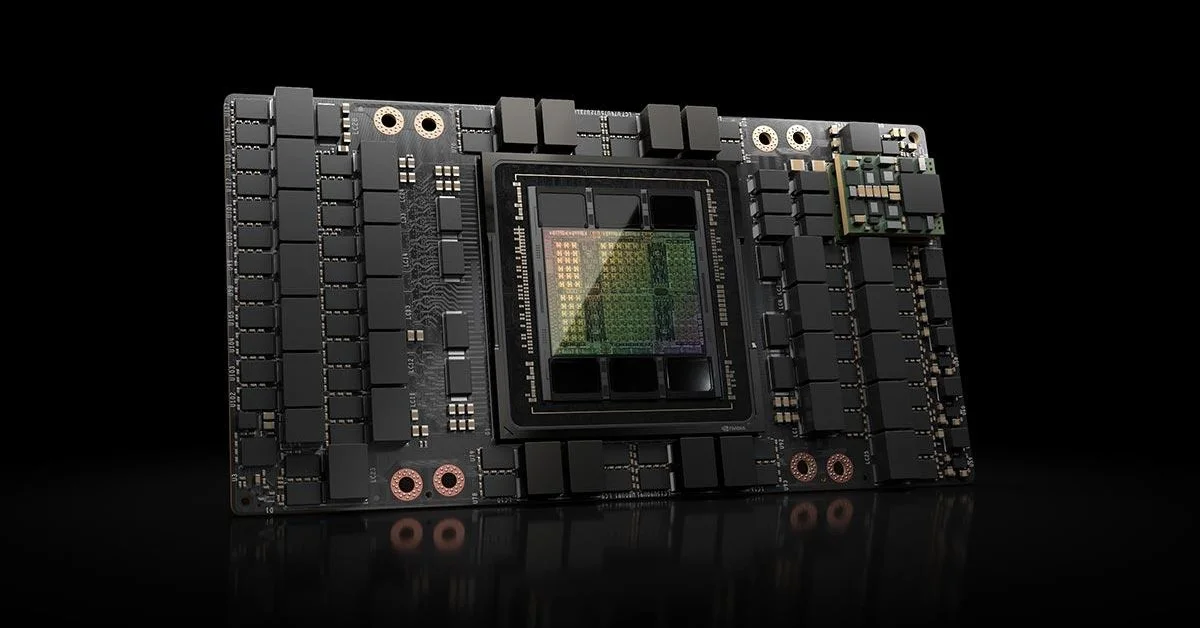

The pressure point is not conventional consumer demand for laptops or smartphones, which has historically driven DRAM market cycles. The newer and more structurally disruptive force is high-bandwidth memory, or HBM, a specialized form of stacked DRAM designed to sit directly alongside AI processors and handle the massive data throughput that large language model training and inference require. HBM production is substantially more complex and time-consuming than standard DRAM production, and because HBM stacks are built from the same DRAM wafers, every wafer diverted to HBM is a wafer unavailable for conventional memory. Samsung, SK Hynix, and Micron are all competing to secure long-term supply agreements with major AI chip designers, most prominently Nvidia.

Why Standard Capacity Expansion Cannot Quickly Fix the Problem

Building new semiconductor fabrication capacity is one of the most capital-intensive and time-delayed processes in modern industry. A new memory fab typically requires three to four years from groundbreaking to volume production, and even expansions of existing facilities take well over a year to yield meaningful output. That structural lag explains why the gap between supply and demand can persist even when investment commitments are announced publicly and appear substantial on paper.

The situation is compounded by the concentration of advanced HBM production. SK Hynix has been widely reported as the leading supplier of HBM3E, the generation used in Nvidia's H200 and Blackwell-series accelerators (the earlier H100 uses HBM3), while Samsung has faced yield challenges in qualifying its own HBM3E output with Nvidia, according to multiple technology trade publications. Micron, the only US-based major DRAM producer, has been ramping its own HBM3E capacity and has secured Nvidia qualification, but its overall share of the HBM market remains smaller than that of either of its Korean competitors.

The broader implication is that the memory bottleneck now constrains not just chip pricing but the pace at which AI infrastructure can be physically deployed. Cloud providers, hyperscalers, and national AI programs are all competing for a limited pool of high-bandwidth memory, a dynamic that is beginning to shape procurement strategies, data center timelines, and even national semiconductor policy in the United States, South Korea, Japan, and Europe. Whether supply catches up with demand before the end of the decade remains an open question, and for now the gap between ambition and production reality is the defining tension in the global memory market.