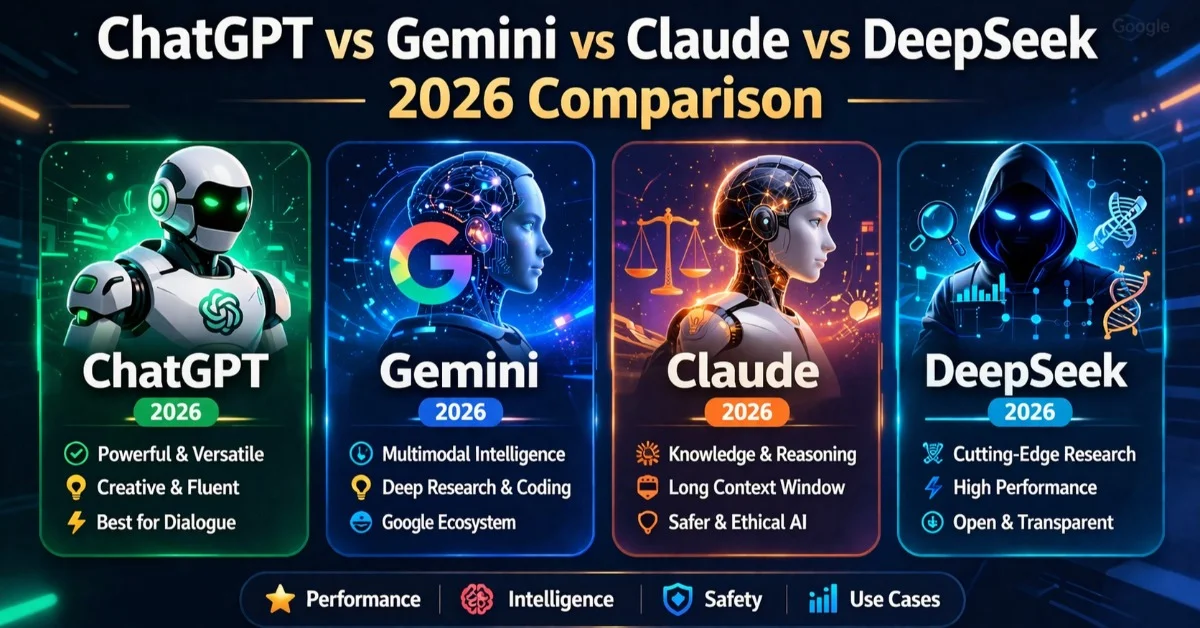

Why This Comparison Matters Now

Last Tuesday, I watched a freelance copywriter friend of mine paste the same product brief into four different AI tools, one after the other, trying to find which one could write a decent landing page for a German SaaS client. ChatGPT gave her something slick but hollow. Gemini produced a draft that read like a Wikipedia summary wearing a blazer. Claude nailed the tone but kept running into usage limits on the free tier. DeepSeek wrote surprisingly competent copy, and she spent the next twenty minutes wondering whether her client's product data was now sitting on a server in Hangzhou. That single afternoon captures exactly why a comparison like this matters in April 2026: these four tools are closer in raw capability than ever before, but the differences in how they behave, what they cost, and what they do with your data have never been more consequential.

The market shifted substantially in the first quarter of 2026 alone. OpenAI launched GPT-5.4 on March 5, rolling native computer use into a single unified model. Anthropic released Claude Opus 4.6 and Sonnet 4.6 in February, slashing API prices by two-thirds compared to the Opus 4.1 generation. Google pushed out Gemini 3.1 Pro with a 2-million-token context window and a new Ultra subscription tier. DeepSeek shipped V4 with a trillion-parameter architecture and benchmark claims that have not yet been independently verified. Every one of these companies wants to be your default thinking tool. None of them deserves to be your default without scrutiny.

Writing and Content Creation

If your primary use for an AI assistant is writing, the choice in 2026 is more nuanced than most comparison articles will admit. Claude Opus 4.6 remains the strongest writer among the four. It produces prose with a natural cadence that requires less editing. It follows stylistic instructions more faithfully, maintains voice consistency across long documents, and shows a kind of restraint that the others lack. Where GPT-5.4 tends to overwrite and Gemini tends to flatten, Claude will match tone and register with a precision that feels almost eerie. For professional writers, editors, and content teams, this matters more than any benchmark number.

GPT-5.4 is a close second, and for certain writing tasks it actually pulls ahead. It excels at structured content: product descriptions, technical documentation, marketing emails with clear CTAs. Its strength is breadth. It can shift between a formal white paper and a breezy Instagram caption faster than Claude, and the output is reliably polished even if it sometimes reads as a little too polished. OpenAI's Thinking mode, available on Plus and above, adds a reasoning layer that improves factual accuracy in long-form content, which is a genuine advantage for research-heavy articles.

Gemini 3.1 Pro is the weakest pure writer of the four, but it has a significant compensating advantage: its 2-million-token context window means you can feed it an entire book manuscript, a full legal brief, or six months of meeting transcripts and get coherent summaries. For tasks where comprehension of a massive body of text matters more than the elegance of the output, Gemini wins by default because the others simply cannot hold that much context at once. Its Workspace integration also makes it the most frictionless option for people who live inside Google Docs, Gmail, and Sheets.

DeepSeek V3.2 writes competently in English, producing clean, functional copy that rarely embarrasses itself. But its outputs have a flatness that experienced editors notice immediately. The model lacks the subtle shifts in sentence rhythm that make Claude and GPT-5.4 feel more human. It also shows noticeable censorship patterns around politically sensitive topics, even in contexts where the sensitivity has nothing to do with Chinese politics. For creative writing, fiction, or anything requiring genuine voice, DeepSeek is the weakest of the four.

Winner: Claude Opus 4.6 for quality and tone. GPT-5.4 for speed and versatility. Gemini for anything that requires processing enormous context.

Coding and Technical Tasks

The Benchmark Picture

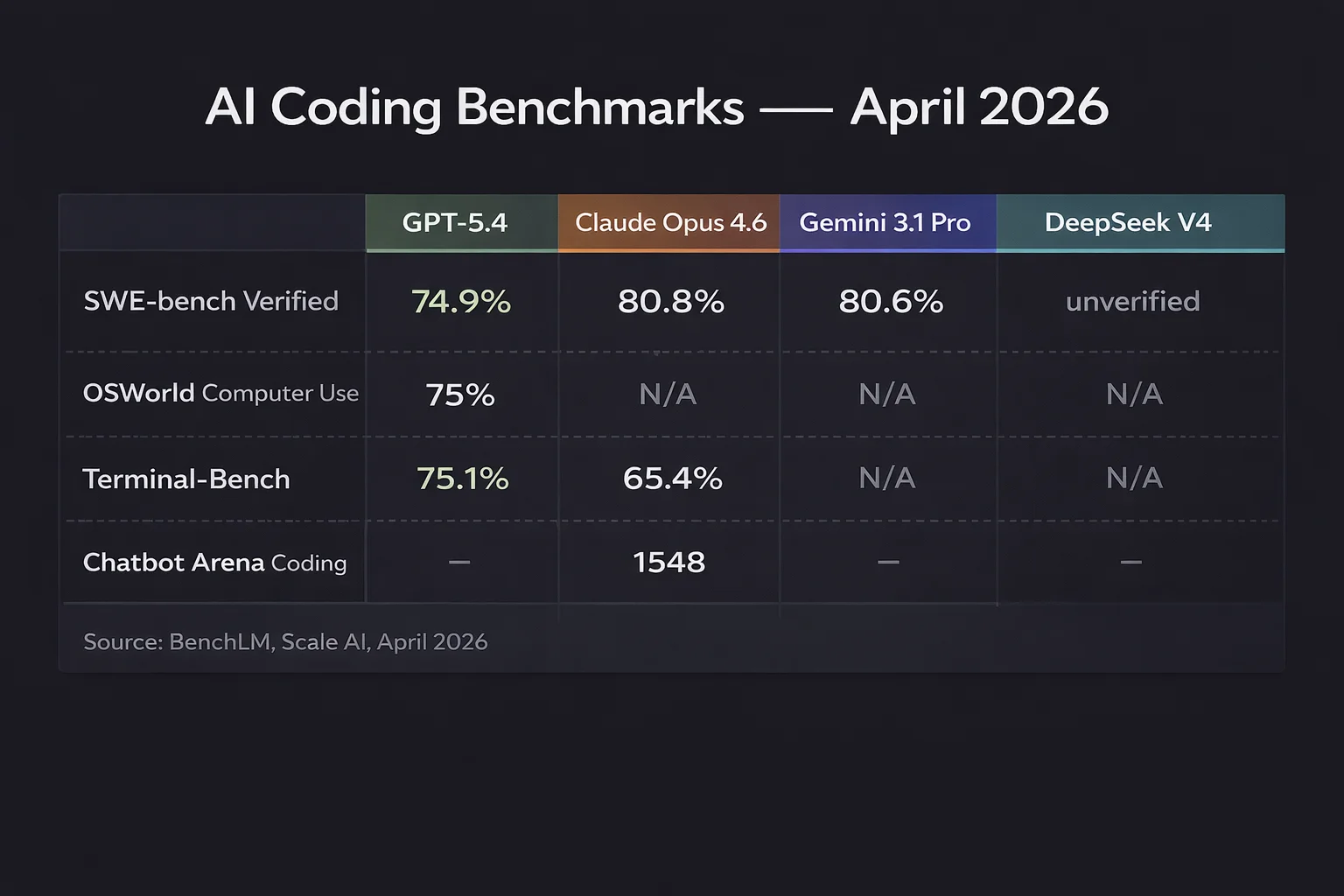

The coding benchmarks in 2026 tell a story of convergence at the top. On SWE-bench Verified, which tests the ability to resolve real GitHub issues, six models now score within roughly one percentage point of each other. Claude Opus 4.6 leads at 80.8%, followed closely by Gemini 3.1 Pro at 80.6% and the open-weight MiniMax M2.5 at 80.2%. GPT-5.4 sits right in that cluster as well, with the exact ranking depending on the evaluation scaffold used. On SWE-bench Pro, the harder multilingual variant run by Scale AI with a standardized harness, GPT-5.4 pulls ahead at 57.7% compared to roughly 46% for Claude Opus 4.6. DeepSeek V4 claims 81% on SWE-bench Verified in leaked benchmarks, but those numbers have not been independently confirmed as of this writing.

Beyond the headline benchmarks, the practical differences emerge more clearly. GPT-5.4 scores 75% on OSWorld for computer use tasks, surpassing the human expert baseline of 72.4%. No other model has crossed that threshold. On Terminal-Bench, which tests live terminal operations like system administration and CI/CD debugging, GPT-5.4 leads at 75.1% compared to Claude Opus 4.6 at 65.4%. Claude, meanwhile, leads the Chatbot Arena coding Elo at 1548, which reflects developer preference in side-by-side comparisons rather than automated pass/fail metrics.

What This Means in Practice

If you write code every day, Claude Opus 4.6 and Sonnet 4.6 remain the most popular choices among professional developers for good reason. Claude understands ambiguous prompts better. It produces code with clearer documentation and more readable structure. It handles complex refactoring tasks with fewer missteps, and Anthropic's Claude Code terminal tool has become a genuine productivity multiplier for many engineering teams. GPT-5.4 is the better choice for DevOps-heavy workflows, infrastructure-as-code, terminal operations, and scenarios where computer use integration matters. Gemini 3.1 Pro is the pragmatic budget pick for coding, matching Opus-tier performance at $2/$12 per million tokens instead of $5/$25. DeepSeek V3.2 offers functional coding assistance at a fraction of the cost, scoring around 60% on SWE-bench Verified, but its API reliability remains a real concern, with frequent 503 errors during peak hours in Beijing time.

Winner: Claude Opus 4.6 for developer experience and code quality. GPT-5.4 for terminal/DevOps tasks and computer use. Gemini 3.1 Pro for the best performance-per-dollar ratio.

Reasoning and Complex Analysis

This is where the four models diverge most sharply, and where benchmark numbers tell you the most about real-world performance. On GPQA Diamond, a test of PhD-level scientific reasoning in biology, chemistry, and physics, Gemini 3.1 Pro leads the pack at 94.3%. Claude Opus 4.6 follows at 91.3% (with some independent evaluations placing it at 87.4% depending on the test harness). GPT-5.4 trails behind in this specific category. On Humanity's Last Exam, the most difficult reasoning benchmark currently in use, Grok 4 from xAI leads at 50.7%, but among our four contenders, GPT-5.4 and Claude Opus 4.6 are broadly competitive.

Traditional benchmarks like MMLU have become nearly useless at the frontier. GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro all score above 87%, and the differences are within noise. MMLU-Pro, a harder variant with ten answer choices, still shows some separation, but the practical takeaway is that all three Western models are roughly equivalent on broad knowledge tasks. DeepSeek V3.2 and V4 perform well on mathematical reasoning and standardized tests but show weakness on tasks requiring cultural nuance, open-ended analysis, or multi-step inference across domains.

In practical terms, Claude excels at the kind of careful, multi-step reasoning that matters in legal analysis, academic research, and complex strategic thinking. Its extended thinking mode produces notably more rigorous chains of logic. GPT-5.4's reasoning strength is more structured and systematic, making it better for financial modeling, data analysis, and tasks where the answer should follow a deterministic path. Gemini's reasoning is strongest when combined with its massive context window: give it an enormous dataset and ask it to find patterns, and it performs superbly. DeepSeek R1 was impressive when it launched, but the reasoning space has moved on, and its successors have not kept pace with the Western frontier models.

Winner: Gemini 3.1 Pro on scientific reasoning benchmarks. Claude Opus 4.6 for nuanced, open-ended analysis. GPT-5.4 for structured, data-driven reasoning.

Multimodal and Image Capabilities

The multimodal gap has narrowed in 2026, but it has not closed. GPT-5.4 offers the most complete multimodal package: native image generation through DALL-E, image understanding built into the core model, and video generation through Sora (though Sora's future at OpenAI appears uncertain, with reports of the product line being discontinued). GPT-5.4 is also the only model with native computer use at the frontier level, meaning it can interact with on-screen interfaces, click buttons, fill forms, and navigate applications. This is a genuinely new category of capability that the other three do not match.

Gemini 3.1 Pro is the strongest on multimodal input. Its ability to process text, images, audio, and video within a single conversation, combined with its 2-million-token context window, makes it the best choice for tasks like analyzing a collection of photos, transcribing and summarizing video content, or working with mixed-media documents. Google's Imagen 4 and Veo 3.1 models offer strong image and video generation, accessible within the Gemini ecosystem. For anyone working in media, marketing, or content production, the integration of these generation tools into the same subscription is a real advantage.

Claude Opus 4.6 understands images well and can analyze screenshots, diagrams, and documents with strong accuracy. However, Anthropic does not offer native image generation. Claude can create SVG graphics and HTML visualizations, which covers some use cases, but it cannot produce photorealistic images or illustrations. This is a deliberate product choice by Anthropic, not a technical limitation, and it means Claude users who need image generation must pair it with another tool.

DeepSeek V4 claims native multimodal generation including text, image, and video, but the full model has not been publicly released in a stable form as of April 2026. The V3.2 model available through the API has limited multimodal capabilities compared to the other three. DeepSeek's strengths lie squarely in text and code, not in visual understanding or generation.

Winner: GPT-5.4 for the most complete multimodal suite. Gemini 3.1 Pro for multimodal input and context length. Claude and DeepSeek lag behind here.

Pricing: Free Tiers, Paid Plans, and API Costs

Consumer Subscriptions

OpenAI now runs six tiers. The free plan gives you access to GPT-5.3 with tight limits and, since February 2026, includes ads in the United States. ChatGPT Go costs $8/month and adds more messages but still includes ads and lacks advanced features like Deep Research, Codex, and Agent Mode. ChatGPT Plus remains $20/month, unchanged for three years, and is the clear sweet spot: full GPT-5.4 Thinking access, Codex, Sora, DALL-E, 10 Deep Research runs per month, and no ads. ChatGPT Pro at $200/month targets heavy users who need GPT-5.4 Pro mode with extended reasoning and near-unlimited generation. OpenAI recently announced a new $100/month Pro tier as a middle option, offering 5x more Codex than Plus.

Anthropic offers a free tier with access to Claude 4.6 but with strict daily limits that can be exhausted within an hour during peak demand. Claude Pro costs $20/month and provides roughly 5x the free usage, Claude Code terminal access, file creation, code execution, and Google Workspace integration. Claude Max runs at $100/month for the 5x tier and $200/month for the 20x tier, aimed at developers and power users who need all-day access.

Google structures Gemini access through Google One. The free tier includes Gemini 2.5 Flash with rate limits. Google AI Pro at $19.99/month adds Gemini 3 access, Deep Research, Veo 3.1 video generation, 1,000 AI credits, and integration across Gmail, Docs, and other Workspace apps. The plan also includes 2TB of Google Drive storage, which effectively reduces the AI-specific cost to about $10/month if you already need cloud storage. Google AI Ultra at roughly $42/month (billed quarterly at $124.99) unlocks Gemini 3.1 Pro, 25,000 AI credits, and YouTube Premium.
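The per-month figures for Google's plans are simple arithmetic, and a short Python sketch makes the assumptions behind them explicit. The $9.99 value placed on 2TB of storage is an assumption, picked only because it reproduces the "about $10" figure above; substitute whatever that storage is actually worth to you. The function and constant names are illustrative.

```python
def monthly_from_quarterly(quarterly_price: float) -> float:
    """Convert a quarterly bill into its per-month equivalent."""
    return quarterly_price / 3


def ai_specific_cost(plan_price: float, bundled_value: float) -> float:
    """Subtract the value of bundled extras you would have paid for anyway."""
    return plan_price - bundled_value


ULTRA_QUARTERLY = 124.99  # Google AI Ultra, billed quarterly (from the text)
PRO_MONTHLY = 19.99       # Google AI Pro (from the text)
STORAGE_VALUE = 9.99      # ASSUMPTION: monthly value of 2TB of cloud storage

ultra_monthly = monthly_from_quarterly(ULTRA_QUARTERLY)
pro_ai_only = ai_specific_cost(PRO_MONTHLY, STORAGE_VALUE)

print(f"AI Ultra: ${ultra_monthly:.2f}/month")
print(f"AI Pro, net of storage: ${pro_ai_only:.2f}/month")
```

If you would not otherwise pay for storage, set `STORAGE_VALUE` to zero and the Pro plan costs the full $19.99 for AI alone.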

DeepSeek is free for web and app use, with no subscription required. The chatbot runs the V3.2 model and does not charge consumers. This is its most disruptive feature. For casual users who want competent AI assistance without paying anything, DeepSeek is hard to argue with, assuming you accept the privacy tradeoffs.

API Pricing

For developers, the pricing picture is more complex. GPT-5.4 costs $2.50 per million input tokens and $15 per million output tokens. Claude Opus 4.6 runs $5 input and $25 output per million tokens, while Sonnet 4.6 sits at $3/$15 and Haiku 4.5 at $1/$5. Gemini 3.1 Pro is priced at $2/$12 per million tokens. DeepSeek V4 costs just $0.30/$0.50, and V3.2 is even cheaper at $0.28/$0.42, with cache hits dropping to $0.028. The cost gap is staggering, and it is widest on output: DeepSeek V4's output tokens cost one-thirtieth of what GPT-5.4 charges and one-fiftieth of what Claude Opus 4.6 charges, while its input tokens cost roughly one-eighth and one-seventeenth as much, respectively.
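To see what these list prices mean for an actual bill, here is a minimal Python sketch that prices a workload under each model and compares any two price sheets. The prices are copied from the paragraph above; the workload of 50 million input and 10 million output tokens per month is a hypothetical chosen for illustration, and the function names are my own.

```python
# USD per million tokens as (input, output), taken from the text above.
PRICES = {
    "GPT-5.4": (2.50, 15.00),
    "Claude Opus 4.6": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Haiku 4.5": (1.00, 5.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "DeepSeek V4": (0.30, 0.50),
    "DeepSeek V3.2": (0.28, 0.42),
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """List-price cost in USD for a given number of input and output tokens."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def price_ratio(expensive: str, cheap: str) -> tuple[float, float]:
    """How many times pricier `expensive` is than `cheap`: (input, output)."""
    e_in, e_out = PRICES[expensive]
    c_in, c_out = PRICES[cheap]
    return e_in / c_in, e_out / c_out


# HYPOTHETICAL monthly workload: 50M input tokens, 10M output tokens.
for model in PRICES:
    cost = workload_cost(model, 50_000_000, 10_000_000)
    print(f"{model:<18} ${cost:>8.2f}")
```

On that hypothetical workload, GPT-5.4 comes to $275, Claude Opus 4.6 to $500, and DeepSeek V4 to $20. Because input and output are priced differently, the multiple between two models depends on your own token mix, so it is worth running the numbers for your traffic rather than relying on a single headline ratio.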

All four providers offer batch processing at 50% discounts and various forms of prompt caching that can reduce costs by up to 90% for repeated context. Google also maintains the most generous free API tier through AI Studio, offering free access to Flash models with rate limits.
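The effect of those discounts can be sketched in a few lines of Python. This is an illustration under stated assumptions, not any provider's billing logic: it assumes cached input is billed at 10% of list price (the "up to 90%" best case), that the 50% batch discount applies to the whole bill, and that the two discounts stack. Real providers define cache pricing and discount stacking differently, so check the current price sheet before budgeting. The function name and the 80% cache share are hypothetical.

```python
def effective_cost(
    input_tokens: int,
    output_tokens: int,
    in_price: float,
    out_price: float,
    cached_fraction: float = 0.0,  # share of input tokens served from cache
    cache_discount: float = 0.90,  # ASSUMPTION: best-case 90% off cached input
    batch: bool = False,
    batch_discount: float = 0.50,  # ASSUMPTION: 50% off the entire bill
) -> float:
    """Estimated USD cost; prices are USD per million tokens."""
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    input_cost = (fresh + cached * (1 - cache_discount)) * in_price / 1_000_000
    output_cost = output_tokens * out_price / 1_000_000
    total = input_cost + output_cost
    if batch:
        total *= 1 - batch_discount
    return total


# Claude Opus 4.6 list prices from the text; the workload is HYPOTHETICAL.
OPUS_IN, OPUS_OUT = 5.00, 25.00
base = effective_cost(50_000_000, 10_000_000, OPUS_IN, OPUS_OUT)
cached = effective_cost(50_000_000, 10_000_000, OPUS_IN, OPUS_OUT,
                        cached_fraction=0.8)
both = effective_cost(50_000_000, 10_000_000, OPUS_IN, OPUS_OUT,
                      cached_fraction=0.8, batch=True)

print(f"list price:      ${base:.2f}")
print(f"80% cached:      ${cached:.2f}")
print(f"cached + batch:  ${both:.2f}")
```

Under these assumptions, caching only discounts the input side, so output-heavy workloads benefit far less from it than the 90% figure suggests.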

Winner: DeepSeek on raw cost. Gemini for the best value subscription when you factor in storage. ChatGPT Plus and Claude Pro are both strong at $20/month, with Plus offering more features and Claude offering better quality per interaction.

Privacy and Data Policies

This section matters more than most reviewers give it credit for. If you use AI for anything involving client data, proprietary information, personal details, or sensitive business strategy, the privacy terms are not optional reading.

OpenAI's free and consumer plans (Free, Go, Plus, Pro) use your conversations to train models by default, though you can opt out in settings. Business and Enterprise plans do not use your data for training, and this is a firm contractual commitment. OpenAI is SOC 2 Type 2 compliant, supports SAML SSO on business tiers, and stores data on U.S. servers.

Anthropic's consumer plans also operate on an opt-out model for training data. You need to actively disable the setting. Team and Enterprise plans do not use your data for training by default. Anthropic stores data in the United States. The Enterprise plan includes HIPAA BAA, DPA, audit logs, and compliance APIs for regulated industries. Anthropic has generally been more transparent about its data practices and has positioned Constitutional AI safety as a core differentiator.

Google's Workspace plans have the most complex data story because Gemini is integrated across so many Google products. On paid Workspace tiers, Google states that it does not use your data for model training. The consumer Gemini app operates under Google's standard privacy terms, which are more permissive. Google is subject to both U.S. and EU regulatory oversight and maintains extensive compliance certifications.

DeepSeek is in a category by itself here, and not in a good way. All data is stored on servers in the People's Republic of China. Under China's 2017 National Intelligence Law, DeepSeek is legally required to cooperate with Chinese intelligence agencies if requested. There is no legal mechanism for the company to resist such requests, unlike Western companies that can challenge government demands in independent courts. Security researchers discovered hidden code in DeepSeek's web platform linking to China Mobile's authentication registry. Italy banned DeepSeek. The Netherlands, Australia, Taiwan, South Korea, the Czech Republic, and multiple U.S. federal agencies have restricted or banned its use on government devices. Investigations are active in at least 13 European jurisdictions. DeepSeek's privacy policy acknowledges collecting keystroke patterns, IP addresses, device identifiers, and uploaded files. For any professional handling sensitive data, using DeepSeek's hosted service is a risk that most compliance officers would reject outright.

The self-hosted alternative deserves a mention: you can run DeepSeek's open model weights locally using tools like LM Studio, which eliminates the data-transfer concern. But embedded censorship patterns persist in the weights regardless of where you host them, and the security vulnerabilities documented by multiple research teams remain intrinsic to the model.

Winner: Anthropic for the strongest privacy-first positioning. OpenAI Business/Enterprise for corporate compliance. Google for Workspace integration with data protection. DeepSeek is last by a wide margin on privacy and should not be used with sensitive data unless self-hosted.

Who Should Use Which Tool: The Honest Verdict

If You Are a Writer, Editor, or Content Professional

Use Claude Pro at $20/month. The writing quality is the best of the four, the tone matching is superior, and the outputs require the least editing. Supplement with GPT-5.4 for structured content like product descriptions and email campaigns. If your content workflow is entirely inside Google Docs, Google AI Pro at $19.99/month is the most friction-free choice, even though Gemini's writing is a tier below Claude and ChatGPT.

If You Are a Software Developer

Claude Opus 4.6, accessed through Claude Code, is the first choice for most coding workflows. The code quality, documentation, and refactoring ability are the best available. If you work heavily in DevOps, infrastructure, or terminal-based workflows, GPT-5.4 with Codex has a meaningful edge. Gemini 3.1 Pro is the best budget option for teams that need frontier-class coding at lower cost. DeepSeek V3.2 is viable as a cost-optimized secondary model for high-volume, non-critical tasks, but do not rely on it as your primary tool due to API reliability issues.

If You Are a Researcher or Analyst

This depends on what kind of research. For scientific reasoning and massive document analysis, Gemini 3.1 Pro's combination of strong GPQA scores and a 2-million-token context window is unmatched. For nuanced qualitative analysis, legal reasoning, or tasks requiring careful judgment, Claude Opus 4.6 with extended thinking is the strongest option. For quantitative analysis and data modeling, GPT-5.4 offers the most systematic approach.

If You Are Budget-Conscious

If you cannot spend anything, DeepSeek's free web chat is the most capable zero-cost option, but read the privacy section above carefully. Google's free Gemini tier is the safest free option. If you can spend $20/month, both ChatGPT Plus and Claude Pro offer enormous value, and the choice comes down to whether you prefer breadth (ChatGPT) or depth (Claude).

If You Work With Sensitive or Regulated Data

Do not use DeepSeek's hosted service. Choose among Anthropic Enterprise, ChatGPT Business/Enterprise, and Google Workspace Enterprise depending on your existing infrastructure. Anthropic's privacy-first positioning and compliance stack make it the strongest default for regulated industries.

If You Need a Single Tool and Cannot Manage Multiple Subscriptions

ChatGPT Plus at $20/month offers the widest range of capabilities in a single subscription: strong writing, strong coding, image generation, computer use, Deep Research, and web browsing. No other single product covers that much ground. Claude is better at writing and coding but lacks image generation. Gemini is better at multimodal input but weaker at writing. ChatGPT Plus is the jack-of-all-trades that is genuinely good at most of them.

There is no single best AI tool in April 2026. There is only the best tool for your specific needs, your specific budget, and your specific tolerance for risk. Anyone who tells you otherwise is selling you something.

Talia Emily Rogic is the Technology & AI Editor at Novaranews.com. She has been testing AI tools professionally since 2023 and holds no financial positions in any of the companies discussed in this article.