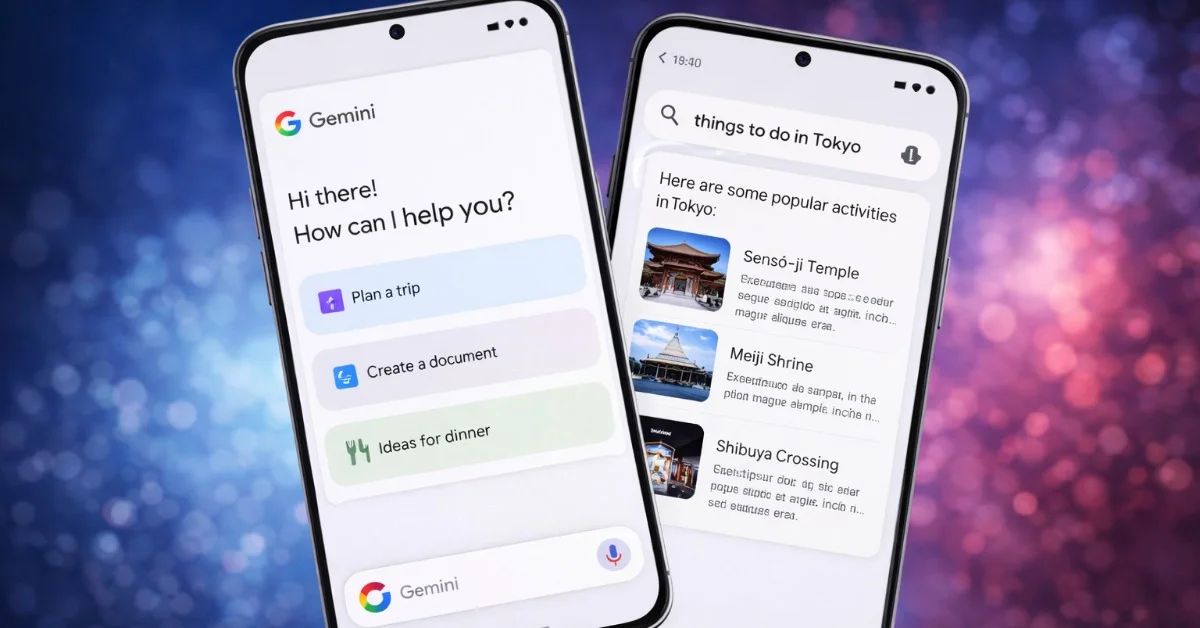

Google used the opening day of Cloud Next in Las Vegas on April 22 to make a clear argument about where its enterprise business is headed: not toward another round of chatbot demos, but toward AI agents that can be built, governed and run inside large organisations. The company said it is launching Gemini Enterprise Agent Platform, folding core Vertex AI capabilities into a broader enterprise stack and pairing that software push with new governance features and new eighth-generation TPU systems. That matters now because Google is trying to prove that its AI spending can turn into repeatable cloud revenue at a moment when enterprise customers have become the industry’s most dependable buyers.

Google recasts Vertex AI as part of a broader agent platform

Google Cloud chief executive Thomas Kurian used the conference keynote to argue that the market has moved past experimentation. Reuters reported that he told attendees “the experimental phase is behind us.” Google, for its part, said Gemini Enterprise Agent Platform combines the model selection, model building and tuning services of Vertex AI with new tools for agent integration, security, DevOps and orchestration. In the same announcement, Google said Gemini Enterprise recorded 40% quarter-over-quarter growth in paid monthly active users in the first quarter, a figure meant to show that the company is finding traction with paying customers rather than just with developers testing features.

Google’s own description of the platform also shows how broad the company wants the enterprise pitch to be. It said the service gives customers access to Google models including Gemini 3.1 Pro, Gemini 3.1 Flash Image and Lyria 3, while also supporting Anthropic models, including newly added support for Claude Opus 4.7. That multi-model support matters in a market where cloud buyers do not want to rebuild their systems around a single model vendor. Rather than insisting on one stack, Google is selling a managed environment in which companies can build and operate agents while still choosing among models.

New TPU systems underline the infrastructure bet behind the software pitch

The software announcement was backed by hardware details that help explain Google’s strategy. Reuters said the company unveiled two new custom tensor processing units, TPU 8t and TPU 8i, on Wednesday. Google’s infrastructure blog described TPU 8t as a training-focused system delivering nearly three times the compute performance of previous generations, with 9,600 chips in a single superpod providing 121 exaflops of compute and two petabytes of shared memory. For inference, Google said TPU 8i is tuned for low-latency agent workflows and delivers 80% better performance per dollar than the prior generation. Those are not side notes: they show Google trying to sell enterprises both the tools to build agents and the infrastructure to run them at scale.
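To put the superpod and inference figures in more familiar terms, the arithmetic below divides Google's stated superpod totals by the chip count and converts the performance-per-dollar claim into a cost ratio. This is a rough back-of-the-envelope sketch, not Google's own breakdown. It assumes decimal SI prefixes (1 exaflop = 10^18 FLOP/s, 1 petabyte = 10^15 bytes), treats the memory figure as an even per-chip average of the shared pool, and reads "80% better performance per dollar" as 1.8 times the prior generation's work per dollar. Google's stated figures do not say at which numeric precision the 121 exaflops were measured, so the per-chip compute number inherits that ambiguity.

$$
\begin{aligned}
\text{compute per chip} &\approx \frac{121 \times 10^{18}\ \text{FLOP/s}}{9{,}600} \approx 1.26 \times 10^{16}\ \text{FLOP/s} \approx 12.6\ \text{PFLOP/s} \\
\text{average shared memory per chip} &\approx \frac{2 \times 10^{15}\ \text{bytes}}{9{,}600} \approx 2.08 \times 10^{11}\ \text{bytes} \approx 208\ \text{GB} \\
\frac{\text{cost per unit of work, TPU 8i}}{\text{cost per unit of work, prior generation}} &= \frac{1}{1.8} \approx 0.56
\end{aligned}
$$

Under that reading, an 80% gain in performance per dollar means the same inference workload would cost roughly 44% less, not 80% less.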

That dual message sharpens Google’s competitive position. Reuters noted that while rivals such as OpenAI and Anthropic have pushed coding tools and plug-ins, Google kept coding largely out of the spotlight and treated agents, governance and enterprise deployment as the main battleground. The contrast is revealing. Google is not presenting Cloud Next as a showcase for individual AI features; it is presenting the cloud business as the operating layer for companies that want agents to handle workflows, use enterprise data and meet oversight requirements. In other words, the pitch is less about a model giving better answers to prompts and more about companies trusting it enough to run business processes on top of it.

The next test will be whether Google can turn that conference momentum into broader adoption. Reuters reported that some of Google’s coding announcements are being held for the company’s I/O developer conference in May, suggesting Cloud Next was deliberately aimed at enterprise buyers rather than the wider developer audience. For now, the practical takeaway is that Google used one of its biggest cloud events of the year to tie together branding, agent software, governance controls and custom silicon in a single story about monetising AI. That is a more concrete signal than another model ranking: it shows where Google believes enterprise spending is moving, and where it wants the next phase of cloud competition to be fought.