As of April 2026, a detailed technical demonstration published on DEV Community shows Google's Gemma 4 multimodal model running as a complete Vision-Language-Action system on an NVIDIA Jetson Orin Nano Super with just 8 GB of unified memory. The system listens through a microphone, reasons over the user's request, decides on its own whether it needs a camera frame, and then answers aloud through a speaker. The entire inference chain never leaves the device.

Why the camera decision is the whole point

Most local voice assistants that add visual input do so clumsily: either the camera fires on every query, spending compute and token budget on frames that add nothing, or the system waits for an explicit phrase like "look at the webcam" before capturing anything. Both approaches offload the decision to the user or to brittle keyword logic. The Gemma 4 setup described here does neither. The model is given a single callable tool, look_and_answer, and uses native tool calling, enabled by the --jinja flag in llama-server, to decide whether the current question actually requires visual context. A query about the weather does not trigger the camera; "What's on the desk in front of me?" does. The distinction sounds small, but architecturally it is the difference between a voice interface bolted onto a vision model and a genuine agent with a minimal but real action space.
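The decision can be sketched against llama-server's OpenAI-compatible chat endpoint, which accepts a `tools` list when the server is started with --jinja. This is an illustrative sketch under assumptions, not the demo's code: the tool description, the `question` parameter, and the helper names `build_request` and `route` are hypothetical. It only builds the request body and interprets an already-parsed response, so the routing logic can be checked without a running server.

```python
import json
from typing import Any

# Hypothetical tool definition in the OpenAI-compatible "tools" format.
# Only the name look_and_answer comes from the demo; the description and
# the "question" parameter are assumptions for illustration.
LOOK_AND_ANSWER_TOOL = {
    "type": "function",
    "function": {
        "name": "look_and_answer",
        "description": (
            "Capture one webcam frame and answer a question that needs "
            "visual context about what is in front of the camera."
        ),
        "parameters": {
            "type": "object",
            "properties": {
                "question": {
                    "type": "string",
                    "description": "The visual question to answer.",
                }
            },
            "required": ["question"],
        },
    },
}


def build_request(user_text: str) -> dict[str, Any]:
    """Build a chat request that leaves the camera decision to the model."""
    return {
        "messages": [{"role": "user", "content": user_text}],
        "tools": [LOOK_AND_ANSWER_TOOL],
        "tool_choice": "auto",  # the model, not keyword matching, decides
    }


def route(response: dict[str, Any]) -> tuple[str, str]:
    """Return ("camera", question) if the model called the tool, else ("speak", text)."""
    message = response["choices"][0]["message"]
    for call in message.get("tool_calls") or []:
        if call["function"]["name"] == "look_and_answer":
            # In this format, tool arguments arrive as a JSON-encoded string.
            args = json.loads(call["function"]["arguments"])
            return "camera", args["question"]
    return "speak", message.get("content") or ""
```

Nothing in `route` inspects the user's words; whether a frame is captured depends entirely on whether the model emitted a `look_and_answer` call.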

The pipeline is straightforward in structure, if demanding in tuning. Speech reaches the board through a microphone and is transcribed by the Parakeet speech-to-text model. The text goes to Gemma 4 running under llama-server, is optionally routed through the look_and_answer tool to capture a webcam frame, and the answer is finally converted back to audio by Kokoro ONNX for playback. Each layer (STT, LLM/VLM, TTS) can be replaced independently, which matters a great deal for debugging on hardware where a failure anywhere in the I/O chain can look identical to a model problem.
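Because each stage is independent, the turn loop itself can stay very small. The following is a minimal sketch, assuming each stage is wrapped as a plain Python callable; the actual wrappers around Parakeet, Gemma 4 and Kokoro ONNX are not shown, and the names `VoicePipeline` and `run_turn` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Each stage is a plain callable so it can be swapped or stubbed on its own.
# These aliases are illustrative; the demo's own interfaces may differ.
Transcribe = Callable[[bytes], str]          # STT, e.g. a Parakeet wrapper
Reason = Callable[[str], tuple[str, str]]    # LLM: returns ("speak"|"camera", text)
LookAndAnswer = Callable[[str], str]         # VLM call that grabs one webcam frame
Synthesize = Callable[[str], bytes]          # TTS, e.g. a Kokoro ONNX wrapper


@dataclass
class VoicePipeline:
    stt: Transcribe
    llm: Reason
    look: LookAndAnswer
    tts: Synthesize

    def run_turn(self, audio_in: bytes) -> bytes:
        text = self.stt(audio_in)
        if not text.strip():
            # An empty transcript points at the audio/STT side, not the model,
            # so fail here instead of sending an empty prompt to the LLM.
            raise ValueError("STT returned an empty transcript")
        action, payload = self.llm(text)
        # The camera stage runs only when the model asked for it.
        answer = self.look(payload) if action == "camera" else payload
        return self.tts(answer)
```

Substituting a stub for any one stage, for example an STT that returns fixed text, isolates whether a fault lives in the audio I/O or in the model.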

Three components that must all be present for multimodal inference

The backend requires three things to function correctly: the quantized Gemma 4 GGUF model file (gemma-4-E2B-it-Q4_K_M.gguf), the vision projector (mmproj-gemma4-e2b-f16.gguf), and the --jinja flag at server startup. Remove any one of them and the system degrades in a specific, non-obvious way: without the mmproj file the image embedding path simply does not work, and without --jinja the chat template handling that native tool calling depends on breaks. The demo's author is precise about this: the three components are not optional extras but load-bearing parts of the architecture.
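A cheap safeguard is to check the server command line for all three pieces before launch, since two of the failure modes are silent. This sketch assumes the server is started from an argument list; `missing_components` is a hypothetical helper, and it recognizes only the `-m`/`--model`, `--mmproj` and `--jinja` spellings.

```python
from pathlib import Path
from typing import Optional


def _flag_value(argv: list[str], *names: str) -> Optional[str]:
    """Return the argument that follows the first matching flag, if any."""
    for i, arg in enumerate(argv[:-1]):
        if arg in names:
            return argv[i + 1]
    return None


def missing_components(argv: list[str], check_files: bool = False) -> list[str]:
    """List which of the three load-bearing pieces a llama-server argv lacks."""
    problems = []
    model = _flag_value(argv, "-m", "--model")
    mmproj = _flag_value(argv, "--mmproj")
    if model is None:
        problems.append("no model (-m): nothing to load")
    if mmproj is None:
        problems.append("no --mmproj: the image embedding path will not work")
    if "--jinja" not in argv:
        problems.append("no --jinja: chat-template tool calling will not work")
    if check_files:
        # Optionally confirm the referenced GGUF files exist on disk.
        for label, path in (("model", model), ("mmproj", mmproj)):
            if path is not None and not Path(path).is_file():
                problems.append(f"{label} file not found: {path}")
    return problems
```

An empty list means all three are present; anything else is worth fixing before blaming the model for missing vision or tool calls.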

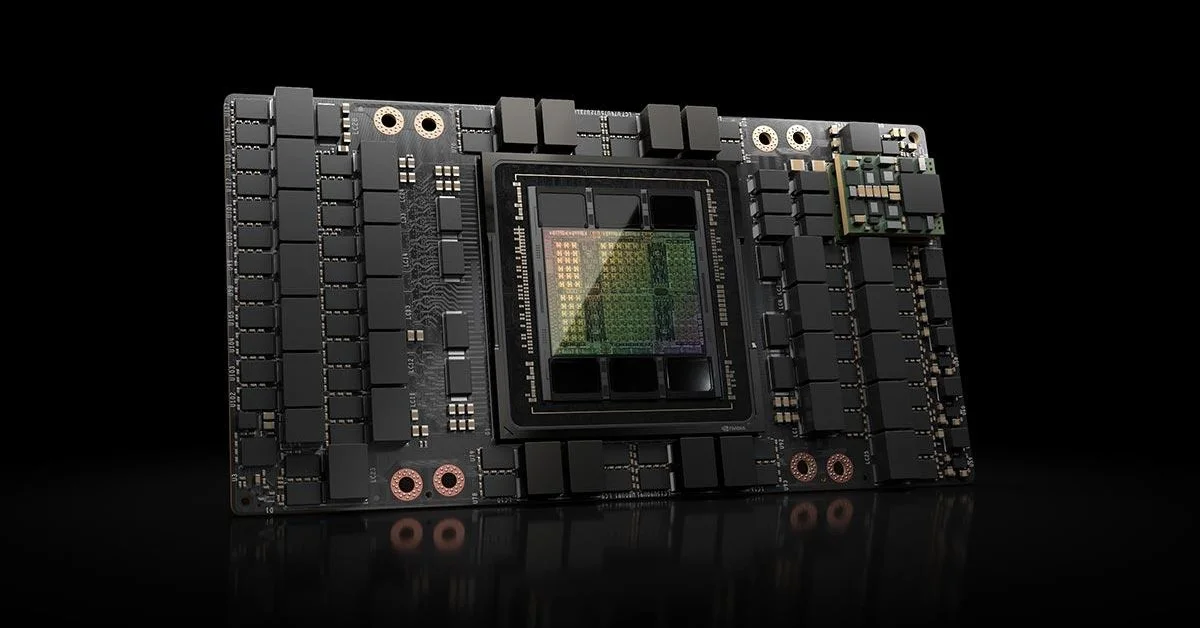

For the CUDA build targeting the Jetson Orin's GPU, the compilation sets -DGGML_CUDA=ON and -DCMAKE_CUDA_ARCHITECTURES=87, matching compute capability 8.7 of the Orin's Ampere-architecture GPU; at runtime, -ngl 99 offloads all model layers to the GPU. Flash attention is enabled. The context window is held at 2,048 tokens, and the image token count is pinned at 70 by setting the minimum and maximum to the same value (--image-min-tokens 70 --image-max-tokens 70). This is a deliberate constraint: every frame costs the same fixed number of tokens, so vision input cannot grow the memory footprint unpredictably from one query to the next.
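The build and launch settings above can be collected in one place. The sketch below only assembles argument lists and does not execute anything; the file names come from the demo, while the helper names are hypothetical. One assumption to verify: recent llama.cpp builds take `--flash-attn on|off|auto`, whereas older builds used a bare `-fa` switch, so check `llama-server --help` on your build. The `text_budget` helper shows the arithmetic behind the fixed image cost: with a 2,048-token context and one 70-token frame, 1,978 tokens remain for the prompt, tool schema and reply.

```python
import shlex

CTX_TOKENS = 2048
IMAGE_TOKENS = 70  # pinned: min == max, so every frame costs the same


def cmake_configure_cmd(build_dir: str = "build") -> list[str]:
    # Compute capability 8.7 is written as "87" in CMAKE_CUDA_ARCHITECTURES.
    return ["cmake", "-B", build_dir, "-DGGML_CUDA=ON", "-DCMAKE_CUDA_ARCHITECTURES=87"]


def server_cmd(model: str, mmproj: str) -> list[str]:
    return [
        "llama-server",
        "-m", model,
        "--mmproj", mmproj,       # vision projector: required for images
        "--jinja",                # chat templates: required for tool calls
        "-ngl", "99",             # more layers than the model has, so all offload
        "--flash-attn", "on",     # version-dependent syntax (assumption)
        "-c", str(CTX_TOKENS),
        "--image-min-tokens", str(IMAGE_TOKENS),
        "--image-max-tokens", str(IMAGE_TOKENS),
    ]


def text_budget(ctx: int = CTX_TOKENS, image_tokens: int = IMAGE_TOKENS) -> int:
    """Tokens left for prompt, tool schema and reply when one frame is attached."""
    return ctx - image_tokens


if __name__ == "__main__":
    print(shlex.join(cmake_configure_cmd()))
    print(shlex.join(server_cmd("gemma-4-E2B-it-Q4_K_M.gguf", "mmproj-gemma4-e2b-f16.gguf")))
    print("text budget with one frame:", text_budget())
```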

Memory budgeting as a first-class engineering concern

Eight gigabytes sounds generous until you account for the OS, audio daemons, the V4L2 camera stack, and whatever else the board is running. The demo's approach is methodical: create an 8 GB swapfile before loading the model, stop Docker and containerd if they are running, kill background indexing processes, and avoid running a browser or IDE at the same time. This is edge engineering in the unglamorous but necessary sense. The AI model is not the only tenant on the system, and it behaves differently depending on what else is competing for memory.
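Before loading the model it helps to measure how much room is actually left after that cleanup. A minimal sketch, assuming a Linux `/proc/meminfo` (values reported in kB); the helper names are hypothetical. On Jetson the GPU draws from the same physical memory as the CPU, so `MemAvailable` is a reasonable first-order proxy for headroom, and swap is reported separately so you can see how much of the margin depends on the swapfile.

```python
from pathlib import Path


def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo-style text into {field: value}, values in kB where a unit is given."""
    fields = {}
    for line in text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            fields[name.strip()] = int(parts[0])
    return fields


def headroom_mib(meminfo: dict[str, int], include_swap: bool = False) -> float:
    """Available memory in MiB, optionally counting free swap."""
    kib = meminfo.get("MemAvailable", 0)
    if include_swap:
        kib += meminfo.get("SwapFree", 0)
    return kib / 1024


def read_headroom_mib(include_swap: bool = False) -> float:
    """Read the live value on the board itself (Linux only)."""
    return headroom_mib(parse_meminfo(Path("/proc/meminfo").read_text()), include_swap)
```

Running `read_headroom_mib()` before and after stopping Docker or closing a browser makes the effect of each cleanup step visible instead of guessed.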

On quantization, the recommendation is Q4_K_M as the primary choice, balancing reasoning quality against memory cost. Q4_K_S suits a text-only Docker deployment. Q3_K_M is the explicit fallback when the board runs out of headroom: quality is lower, but it can be the difference between a working system and an out-of-memory crash. The author notes that Gemma 4 at these quantization levels has a practical advantage over heavier models: it actually fits and runs inference on real hardware, not just in benchmarks on server-class machines.
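The fallback rule can be expressed as a small selection function. This is a sketch under stated assumptions: file size on disk stands in as a rough proxy for weight memory, the 1,024 MiB `reserve_mib` for KV cache, projector and runtime overhead is a placeholder rather than a measured figure, and the function names are hypothetical. Sizes are measured from the GGUF files actually downloaded rather than hard-coded.

```python
from pathlib import Path
from typing import Optional

# Preference order from the text: Q4_K_M first, Q3_K_M as the low-memory
# fallback. Q4_K_S is described for text-only Docker use, so it is omitted.
PREFERENCE = ["Q4_K_M", "Q3_K_M"]


def pick_quant(
    sizes_mib: dict[str, float],
    headroom_mib: float,
    reserve_mib: float = 1024.0,  # placeholder margin, not a measured value
) -> Optional[str]:
    """Return the most preferred quant whose file plus reserve fits the headroom."""
    for quant in PREFERENCE:
        size = sizes_mib.get(quant)
        if size is not None and size + reserve_mib <= headroom_mib:
            return quant
    return None  # nothing fits: free more memory before loading


def sizes_from_disk(paths: dict[str, str]) -> dict[str, float]:
    """Measure candidate GGUF sizes in MiB, skipping files that are not present."""
    return {
        quant: Path(p).stat().st_size / (1024 * 1024)
        for quant, p in paths.items()
        if Path(p).is_file()
    }
```

Returning `None` rather than silently loading the smallest file keeps an out-of-memory risk visible at startup instead of mid-conversation.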

What the project ultimately shows is less about Gemma 4 specifically and more about where edge inference is heading. The toolchain here (llama.cpp, ONNX-based TTS, open speech recognition, a commodity webcam) is entirely open and available today. The constraint is not capability but configuration discipline: knowing which flags matter, which background processes to kill, and why a missing projector file produces a subtly broken system rather than an obvious error. That kind of knowledge separates a demo that runs from one that runs reliably, and the Jetson Orin Nano is now capable hardware for both.